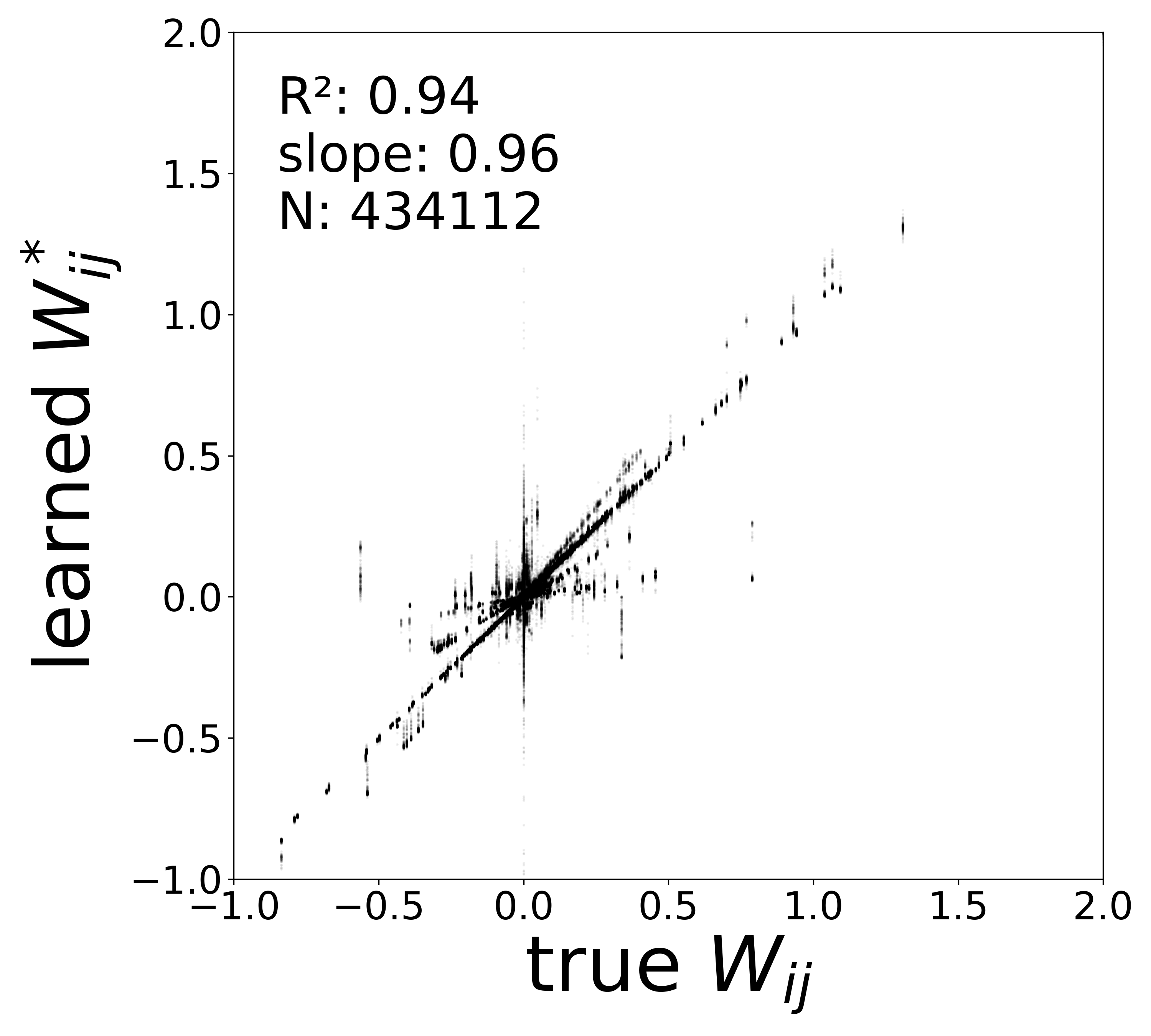

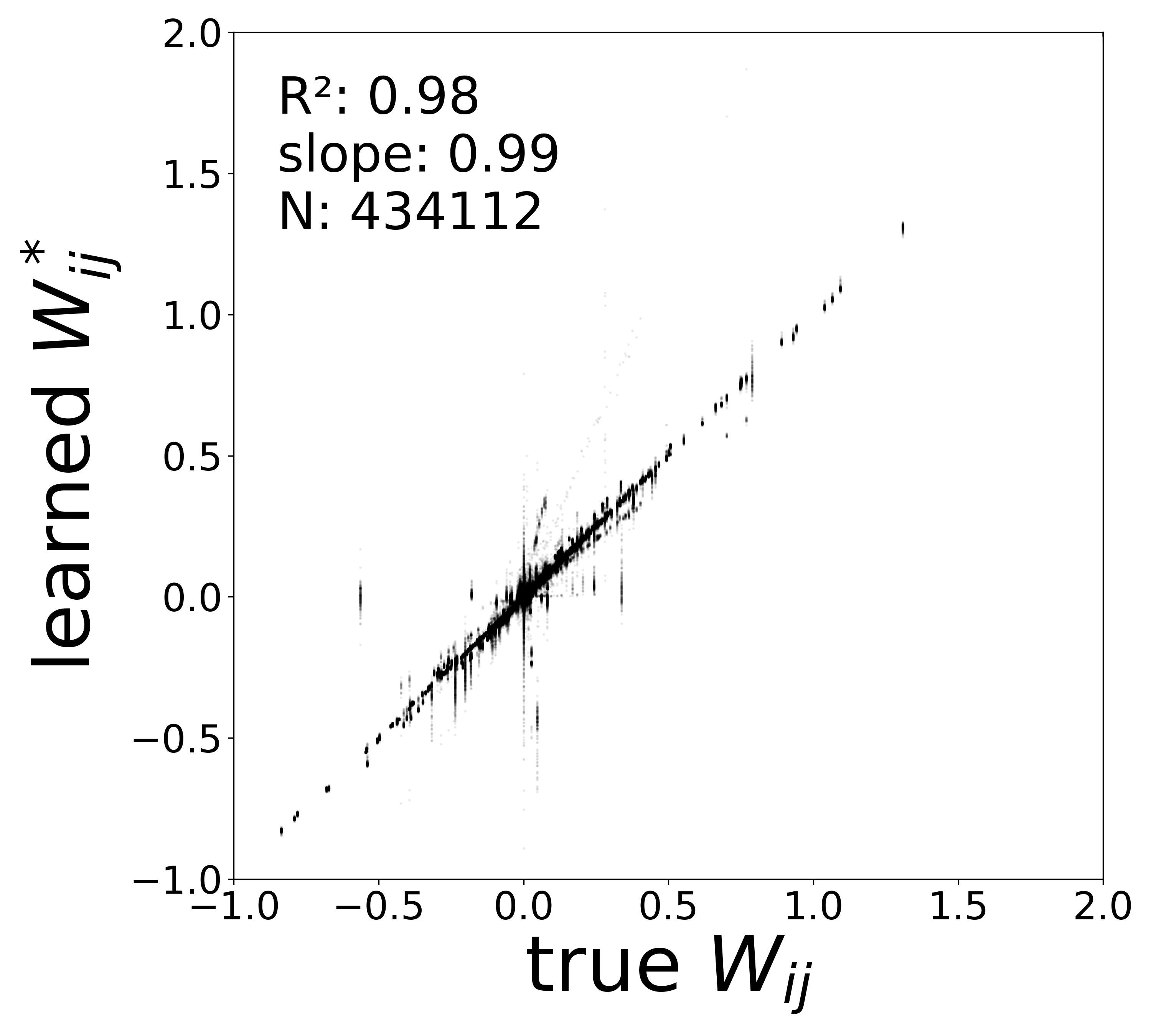

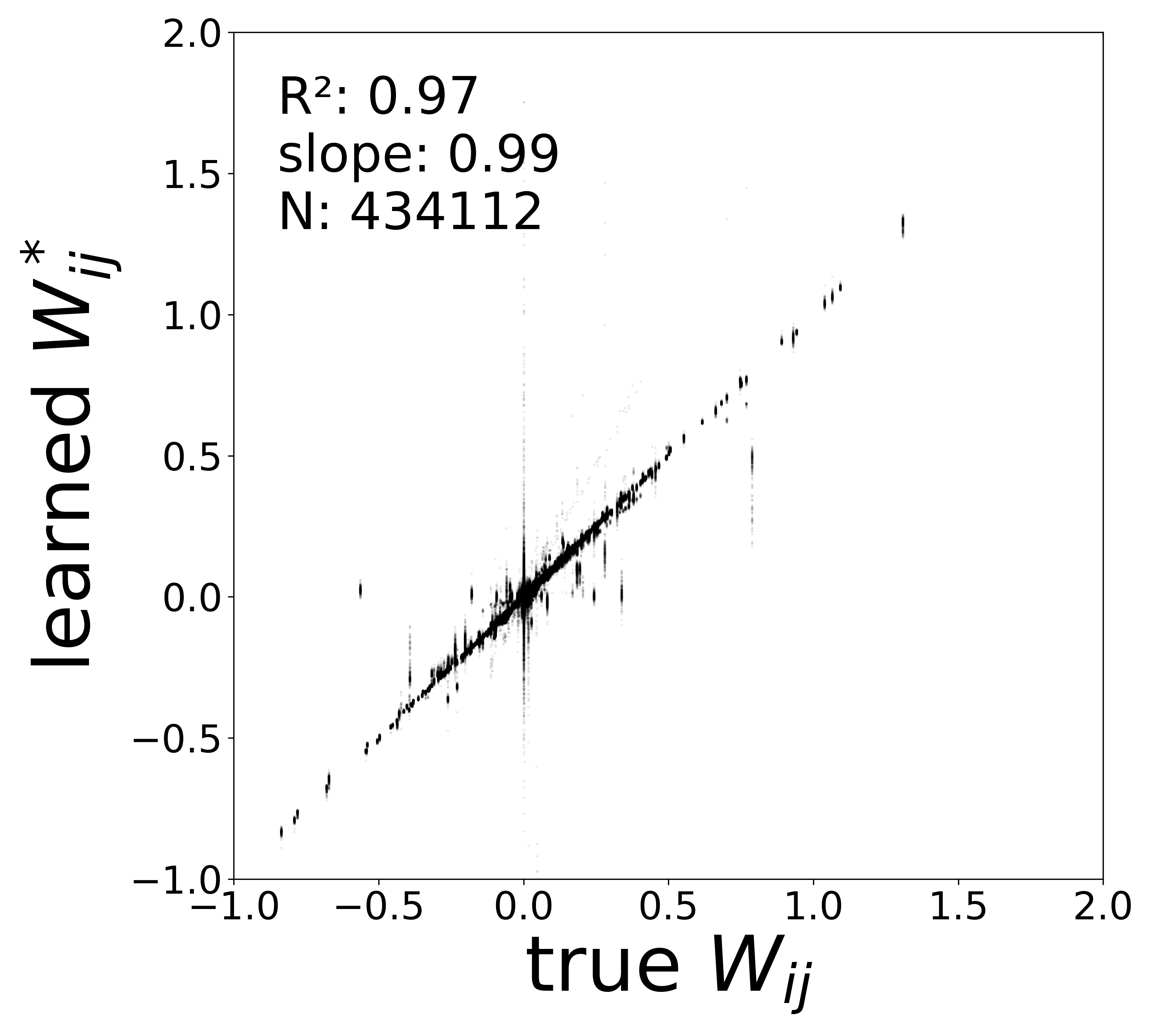

| Metric | 100% | 200% | 400% |
|---|---|---|---|
| \(W\) corrected \(R^2\) | 0.9366 | 0.9772 | 0.9703 |
| \(W\) corrected slope | 0.9593 | 0.9946 | 0.9863 |

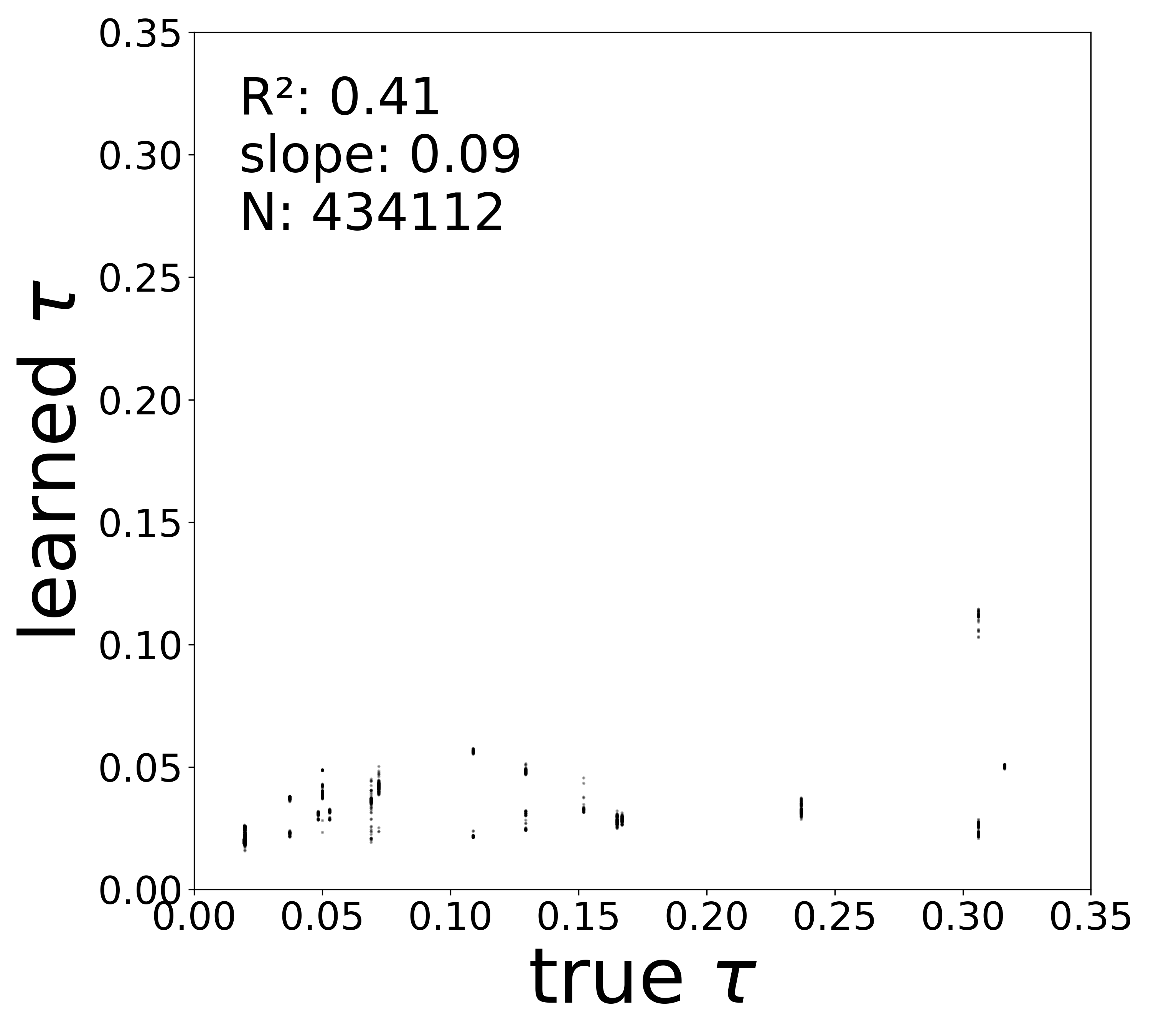

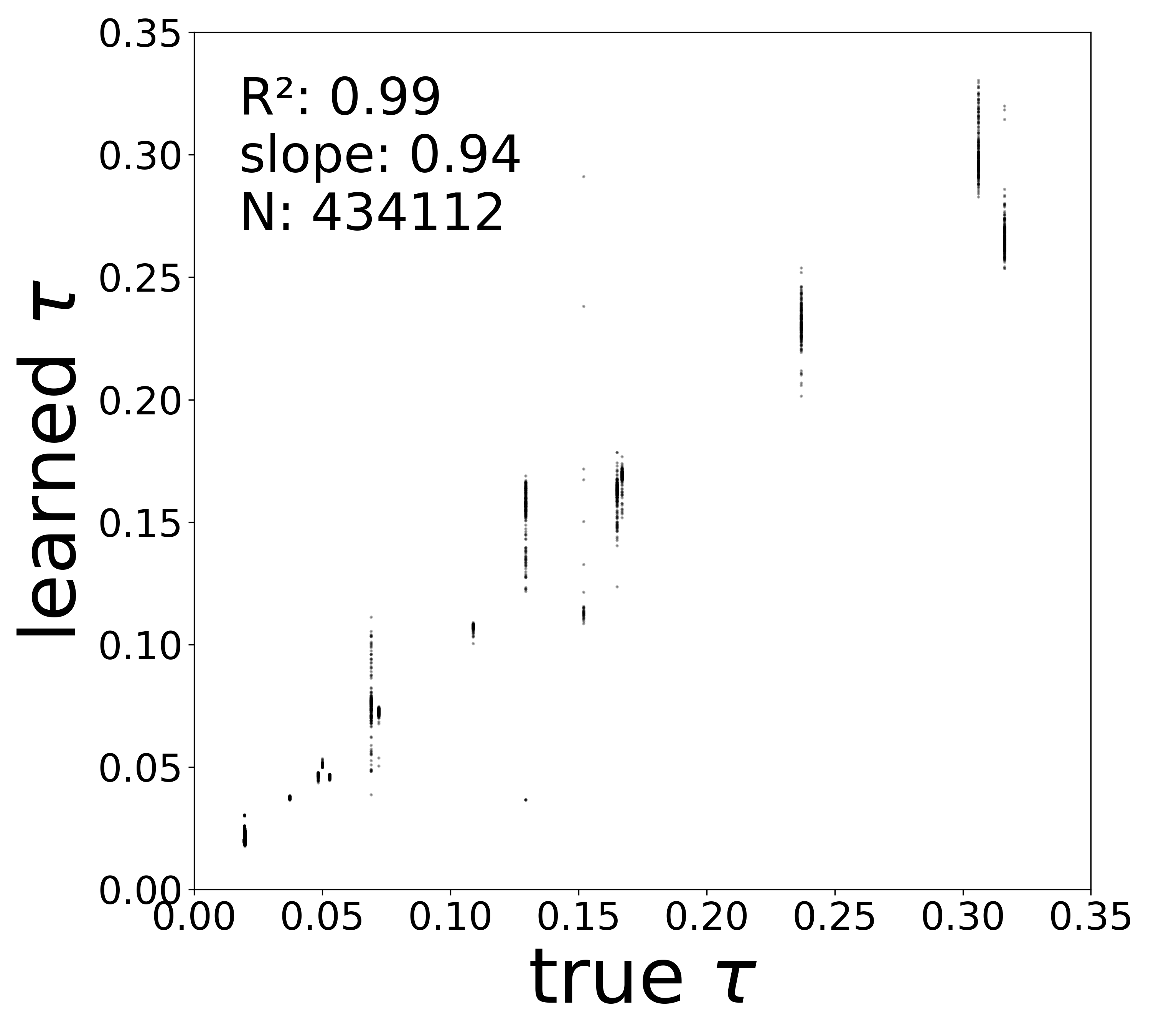

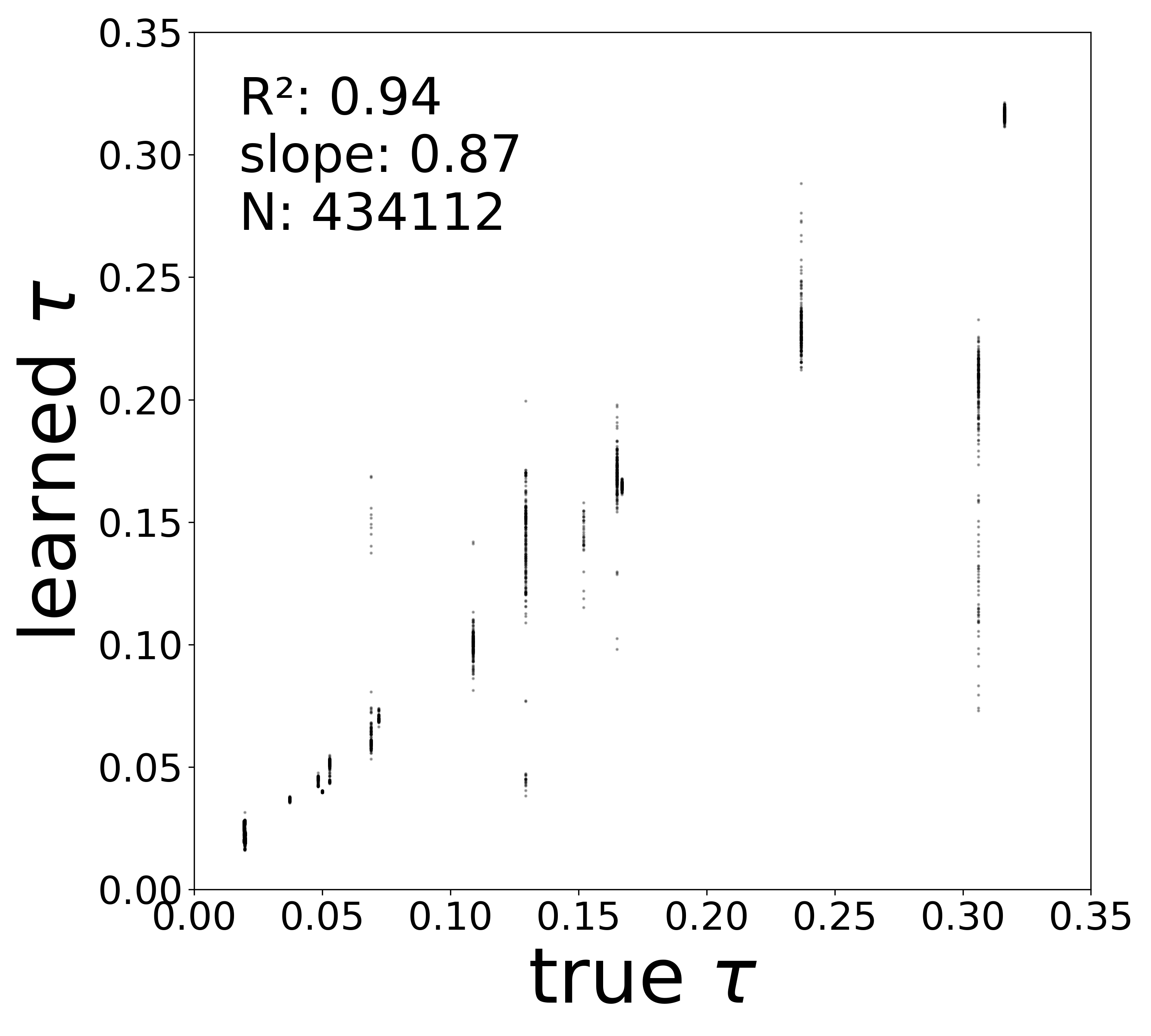

| Metric | 100% | 200% | 400% |
|---|---|---|---|
| \(\tau\) \(R^2\) | 0.4097 | 0.9852 | 0.9381 |

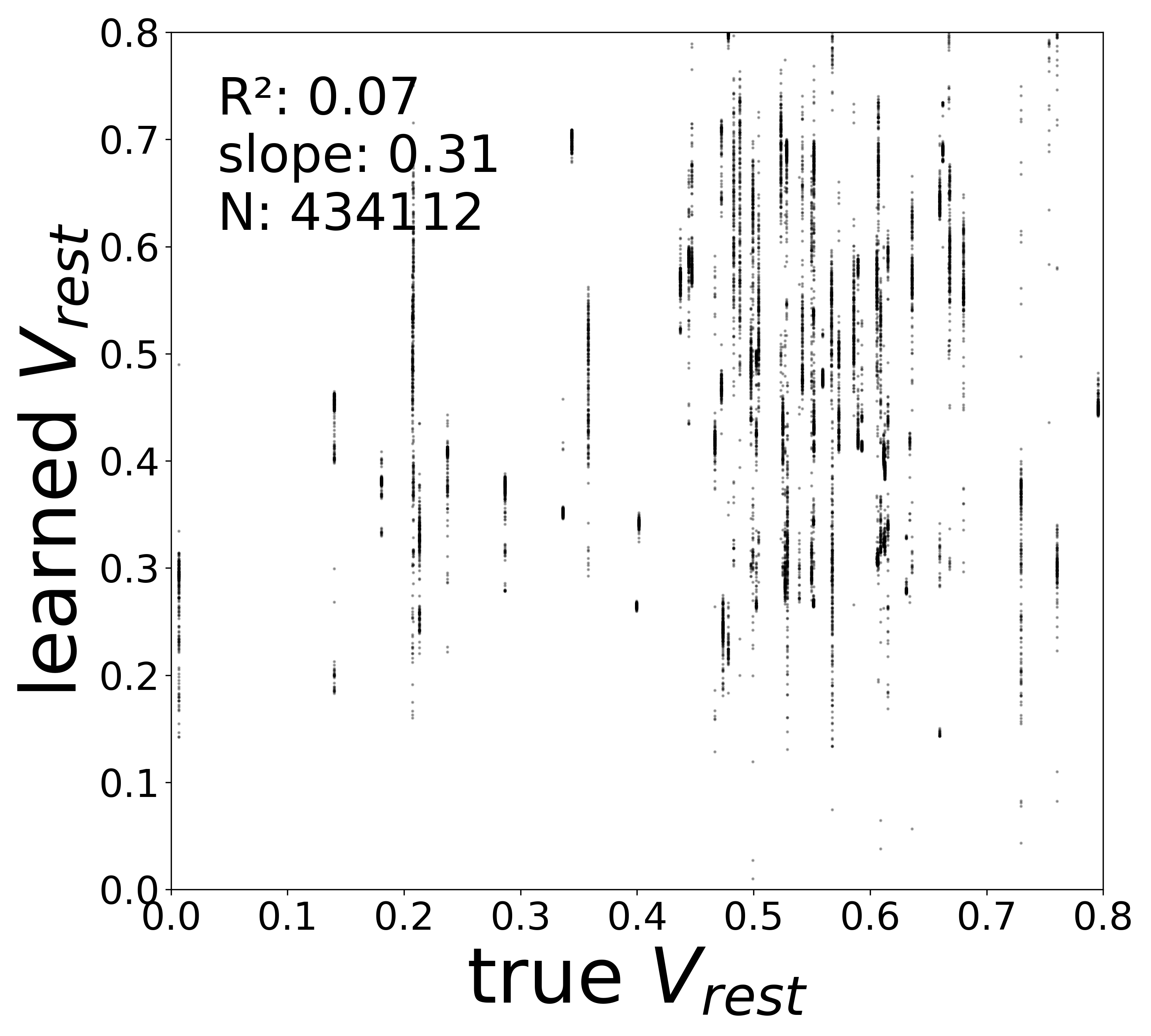

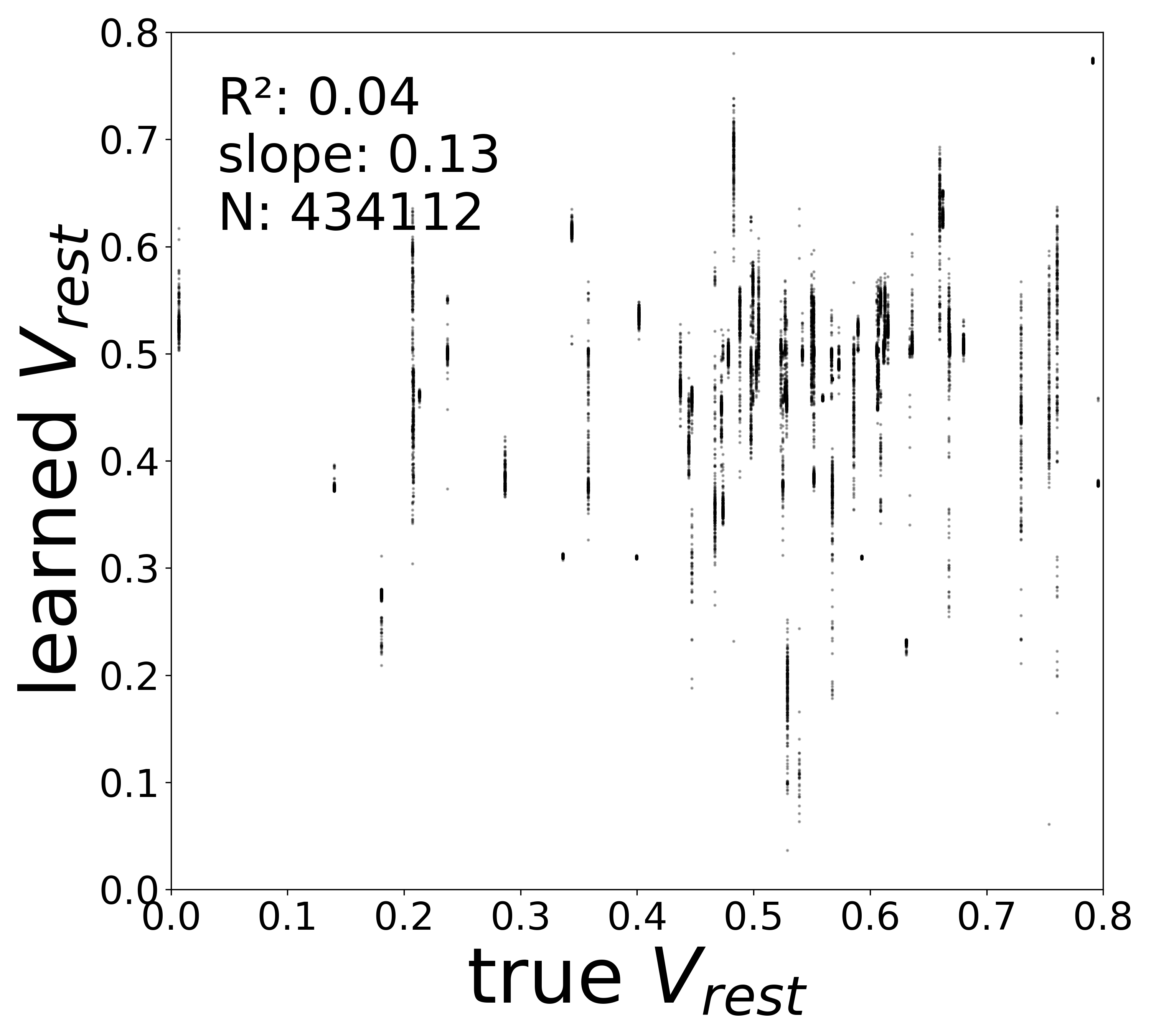

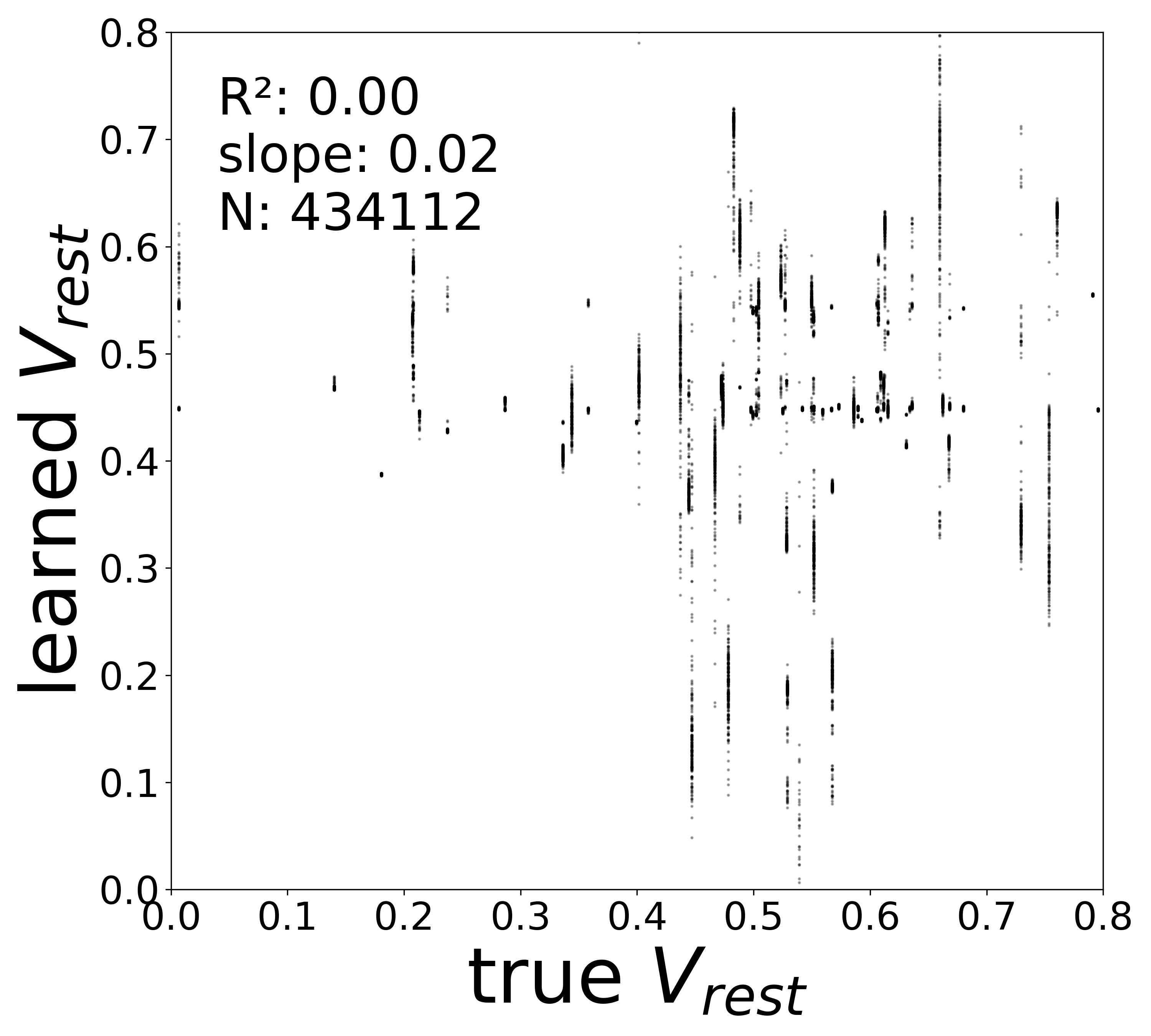

| Metric | 100% | 200% | 400% |
|---|---|---|---|
| \(V^{\text{rest}}\) \(R^2\) | 0.0690 | 0.0395 | 0.0007 |

Robustness to Extra Null Edges


In a real experimental setting, the connectome may contain false positives: spurious synaptic connections that do not carry functional weight. To test robustness to such noise in the adjacency matrix, we augmented the true connectome (434,112 edges) with random null edges — connections between randomly chosen neuron pairs that carry zero true weight. The GNN must learn to assign near-zero weights to these null edges while still recovering the true synaptic structure.
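The augmentation described above can be sketched as follows. This is a minimal illustration, not the notebook's own code: the function name `add_null_edges`, the `(2, n_edges)` edge-index layout, and the choice to allow self-connections are all assumptions. It draws candidate pairs uniformly and rejects any pair that is already connected.

```python
import numpy as np

def add_null_edges(edge_index, n_neurons, n_null, seed=0):
    """Append n_null random edges between pairs that are not already connected.

    edge_index: integer array of shape (2, n_edges), rows = (source, target).
    Returns the augmented edge index and a boolean mask marking the null edges.
    Hypothetical helper: loops forever if fewer than n_null free pairs exist.
    """
    edge_index = np.asarray(edge_index, dtype=np.int64)
    rng = np.random.default_rng(seed)
    # Encode each (source, target) pair as a single integer for fast lookups.
    existing = set((edge_index[0] * n_neurons + edge_index[1]).tolist())
    new_codes = []
    while len(new_codes) < n_null:
        src = rng.integers(0, n_neurons, size=n_null)
        dst = rng.integers(0, n_neurons, size=n_null)
        for code in (src * n_neurons + dst).tolist():
            if code not in existing:  # reject pairs that are already present
                existing.add(code)
                new_codes.append(code)
                if len(new_codes) == n_null:
                    break
    new_codes = np.array(new_codes, dtype=np.int64)
    null_edges = np.stack([new_codes // n_neurons, new_codes % n_neurons])
    augmented = np.concatenate([edge_index, null_edges], axis=1)
    is_null = np.concatenate(
        [np.zeros(edge_index.shape[1], dtype=bool), np.ones(n_null, dtype=bool)]
    )
    return augmented, is_null

# Toy example: a 5-neuron chain with 4 true edges, contaminated at the 100% level.
edges = np.array([[0, 1, 2, 3], [1, 2, 3, 4]])
augmented, is_null = add_null_edges(edges, n_neurons=5, n_null=4)
print(augmented.shape, int(is_null.sum()))
```

The returned mask is only available in simulation, where the true connectome is known; it is what makes it possible to check afterwards that the null edges received near-zero weights.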

We tested three levels of null-edge contamination:

| Config | Extra null edges | Total edges | Ratio (null:true) |
|---|---|---|---|
| `flyvis_noise_005_null_edges_100` | 434,112 | 868,224 | 1:1 (100%) |
| `flyvis_noise_005_null_edges_200` | 868,224 | 1,302,336 | 2:1 (200%) |
| `flyvis_noise_005_null_edges_400` | 1,736,448 | 2,170,560 | 4:1 (400%) |
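As a quick check on the edge counts in the table, the arithmetic can be reproduced from the true edge count alone. The helper name `edge_counts` is illustrative and not part of the notebook.

```python
def edge_counts(n_true, percent):
    """Return (n_null, n_total) for a null-edge level given as a percentage of n_true."""
    n_null = n_true * percent // 100
    return n_null, n_true + n_null

n_true = 434_112  # edges in the true connectome, as stated in the text
for percent in (100, 200, 400):
    n_null, n_total = edge_counts(n_true, percent)
    print(f"{percent}%: {n_null:,} null / {n_total:,} total "
          f"-> {n_null / n_total:.1%} of edges are null")
```

Seen this way, null edges make up 50.0%, 66.7%, and 80.0% of all edges at the three levels, so in the 400% condition four out of every five edges passed to the GNN are spurious.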

Noise Level

Recall that the simulated dynamics include an intrinsic noise term \(\sigma\,\xi_i(t)\) where \(\xi_i(t) \sim \mathcal{N}(0,1)\) (Notebook 00). All null-edge experiments presented here use a fixed noise level of \(\sigma = 0.05\) (low noise). To change the noise level, edit the noise_model_level field in the respective config files:

- `config/fly/flyvis_noise_005_null_edges_100.yaml`
- `config/fly/flyvis_noise_005_null_edges_200.yaml`
- `config/fly/flyvis_noise_005_null_edges_400.yaml`
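If the same change has to be applied to all three files, a small text-level helper can do it. The sketch below assumes that `noise_model_level` appears exactly once in each file as a plain `key: number` line; the surrounding keys in the example string (`simulation`, `n_frames`) are invented for illustration and are not taken from the real configs.

```python
import re

def set_noise_level(yaml_text, sigma):
    """Return yaml_text with the value of the noise_model_level field replaced.

    Hypothetical helper: works on the raw text so that comments and key order
    in the file are left untouched.
    """
    pattern = re.compile(r"^([ \t]*noise_model_level[ \t]*:[ \t]*)\S+", re.MULTILINE)
    new_text, n_subs = pattern.subn(lambda m: f"{m.group(1)}{sigma}", yaml_text)
    if n_subs != 1:
        raise ValueError(f"expected one noise_model_level field, found {n_subs}")
    return new_text

# Made-up config fragment; the real files may be laid out differently.
example = "simulation:\n  noise_model_level: 0.05\n  n_frames: 1000\n"
print(set_noise_level(example, 0.5))
```

To apply it to a real file, read the text, pass it through `set_noise_level`, and write the result back; the function raises rather than silently doing nothing if the field is missing or duplicated.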

Results

Each config extends the base flyvis_noise_005 setup with a different number of extra null edges injected into the adjacency matrix. The null edges are sampled uniformly at random among neuron pairs not already connected. The GNN architecture and training hyperparameters are otherwise identical across conditions.

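The GNN's weight recovery and rollout accuracy are robust to null-edge contamination: even with 4x as many spurious edges as real ones, the corrected \(W\) \(R^2\) is 0.97 and the rollout RMSE stays within 0.001 of its value at the 100% level. The model effectively learns to assign near-zero weights to the null edges while preserving the true synaptic structure and neuron-type identity. Recovery of the biophysical parameters is less uniform: the \(\tau\) \(R^2\) is 0.99 at 200% and 0.94 at 400% but only 0.41 at 100%, and the \(V^{\text{rest}}\) \(R^2\) stays below 0.07 at every contamination level.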
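One way to make the "near-zero weights on null edges" statement concrete is to compare learned weight magnitudes on null and true edges. The sketch below is an illustration under assumptions: `learned_w` is a flat array with one learned weight per edge, `is_null` is a boolean mask known from the simulation setup, and the tolerance `tol` is an arbitrary choice rather than a value used in the notebook.

```python
import numpy as np

def null_edge_summary(learned_w, is_null, tol=1e-2):
    """Summarize learned weight magnitudes on null versus true edges."""
    learned_w = np.asarray(learned_w, dtype=float)
    is_null = np.asarray(is_null, dtype=bool)
    null_mag = np.abs(learned_w[is_null])
    true_mag = np.abs(learned_w[~is_null])
    return {
        # Share of null edges whose learned weight is effectively zero.
        "frac_null_below_tol": float(np.mean(null_mag < tol)),
        "mean_abs_null": float(null_mag.mean()),
        "mean_abs_true": float(true_mag.mean()),
    }

# Synthetic example: two true edges followed by three null edges.
summary = null_edge_summary(
    learned_w=[0.5, -0.3, 0.001, -0.002, 0.05],
    is_null=[False, False, True, True, True],
)
print(summary)
```

A model that has suppressed the null edges shows a `frac_null_below_tol` close to 1 and a `mean_abs_null` well below `mean_abs_true`.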

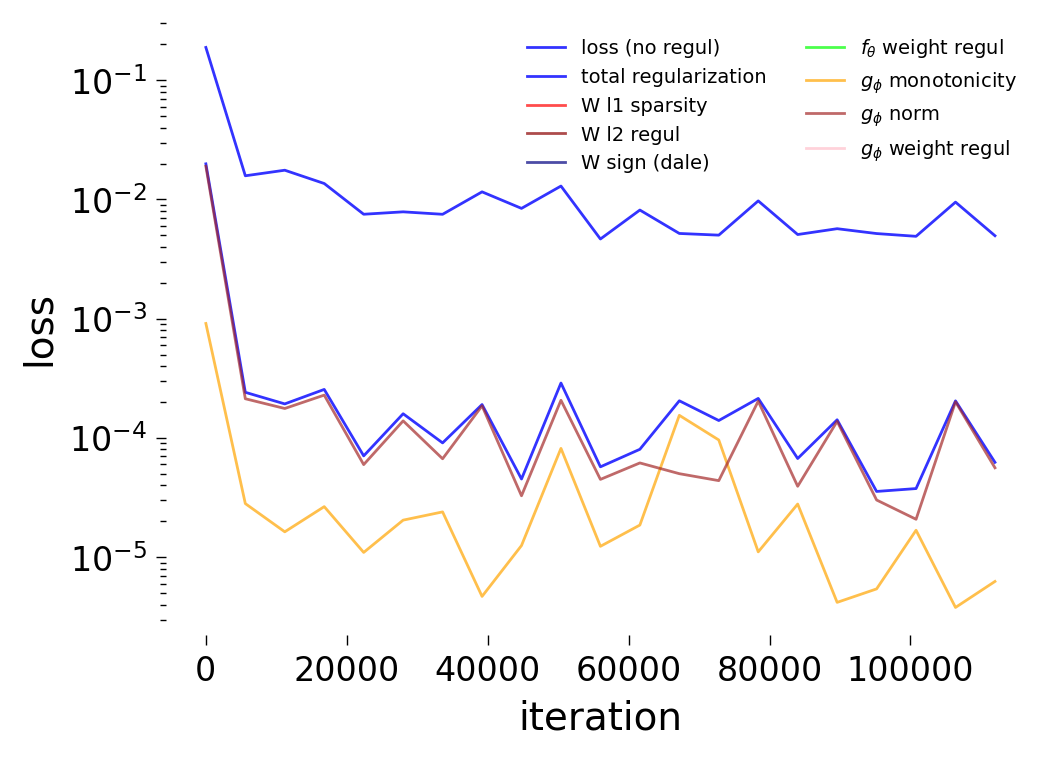

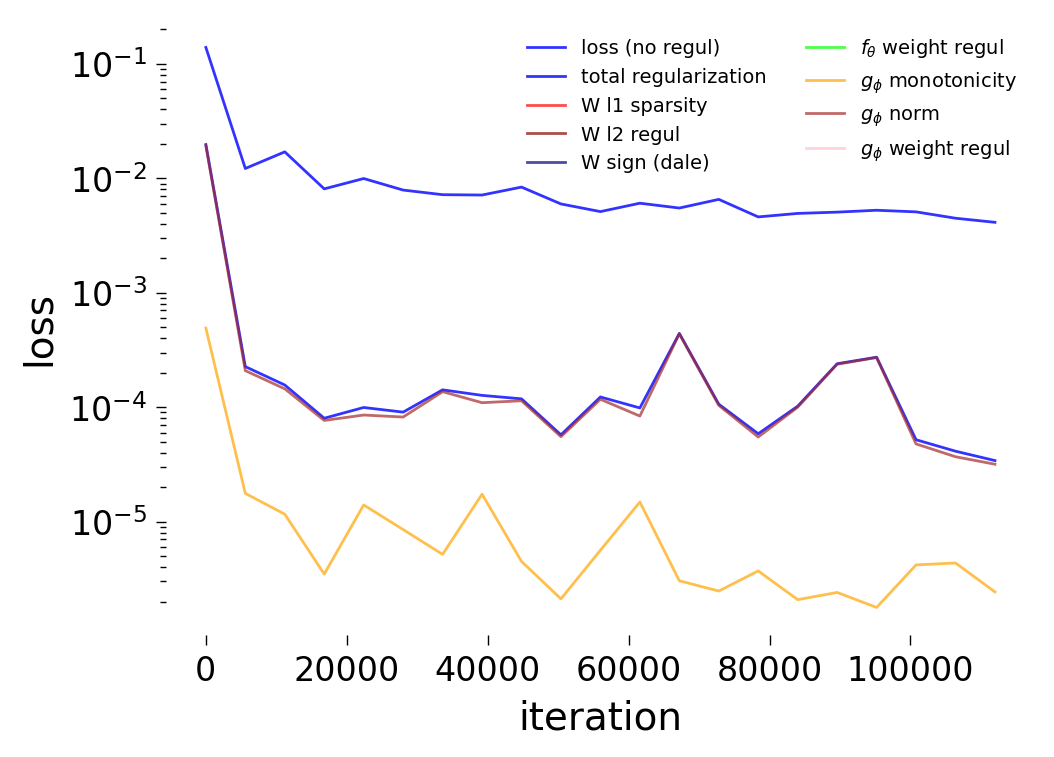

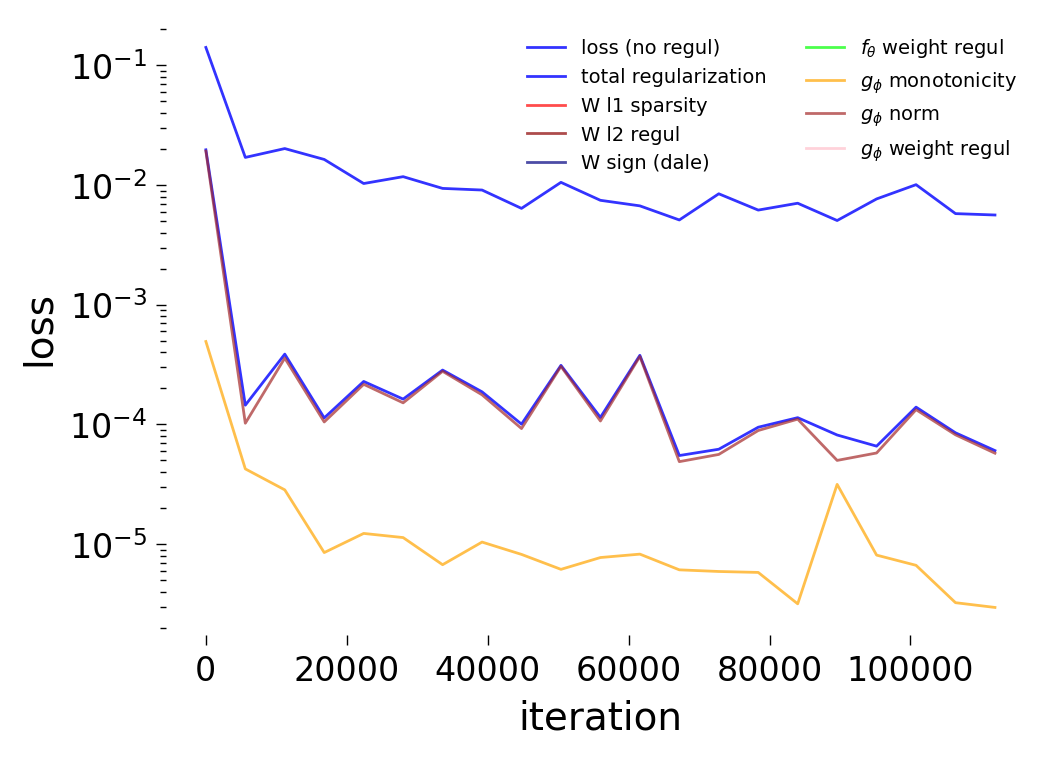

Loss Curves

Testing

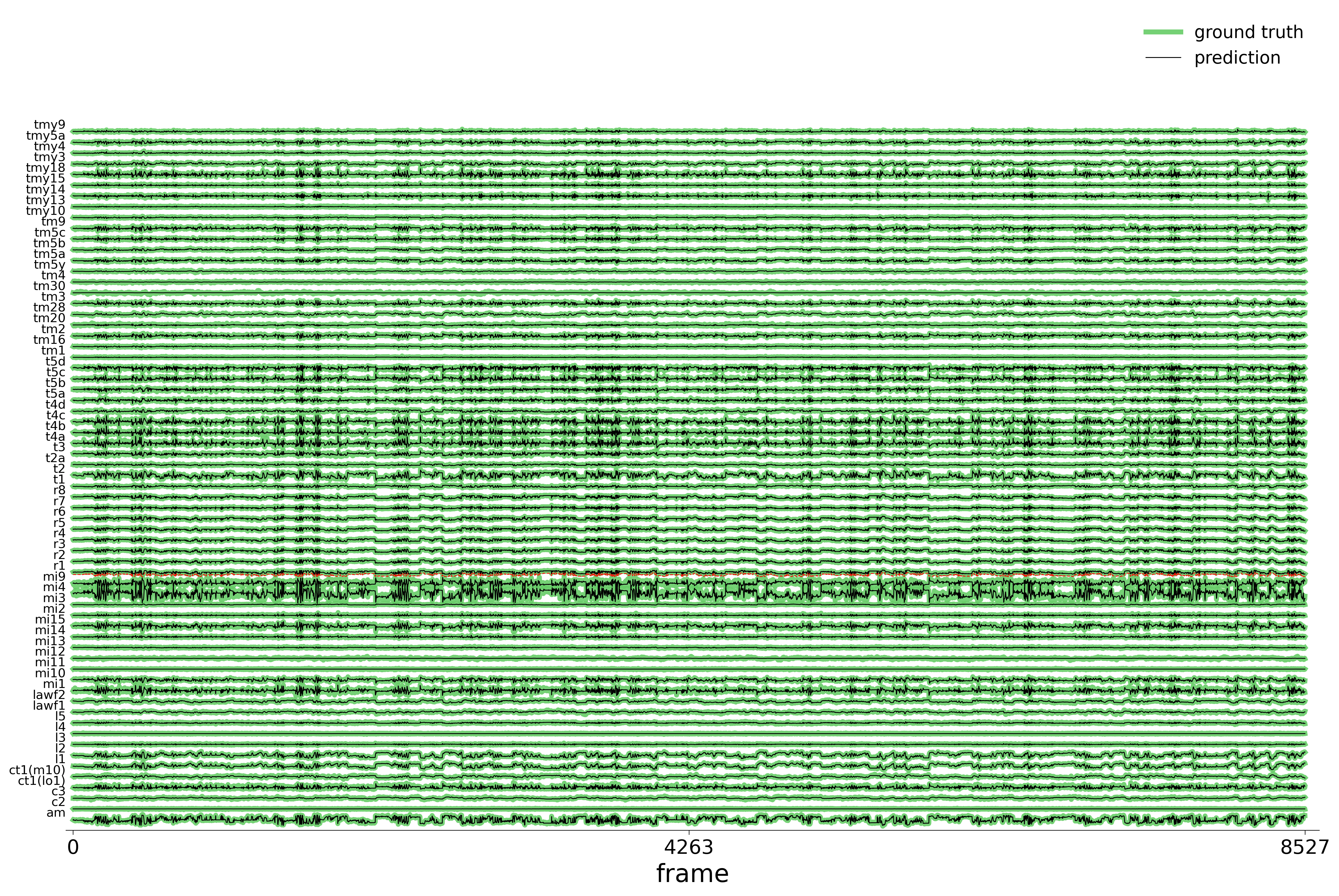

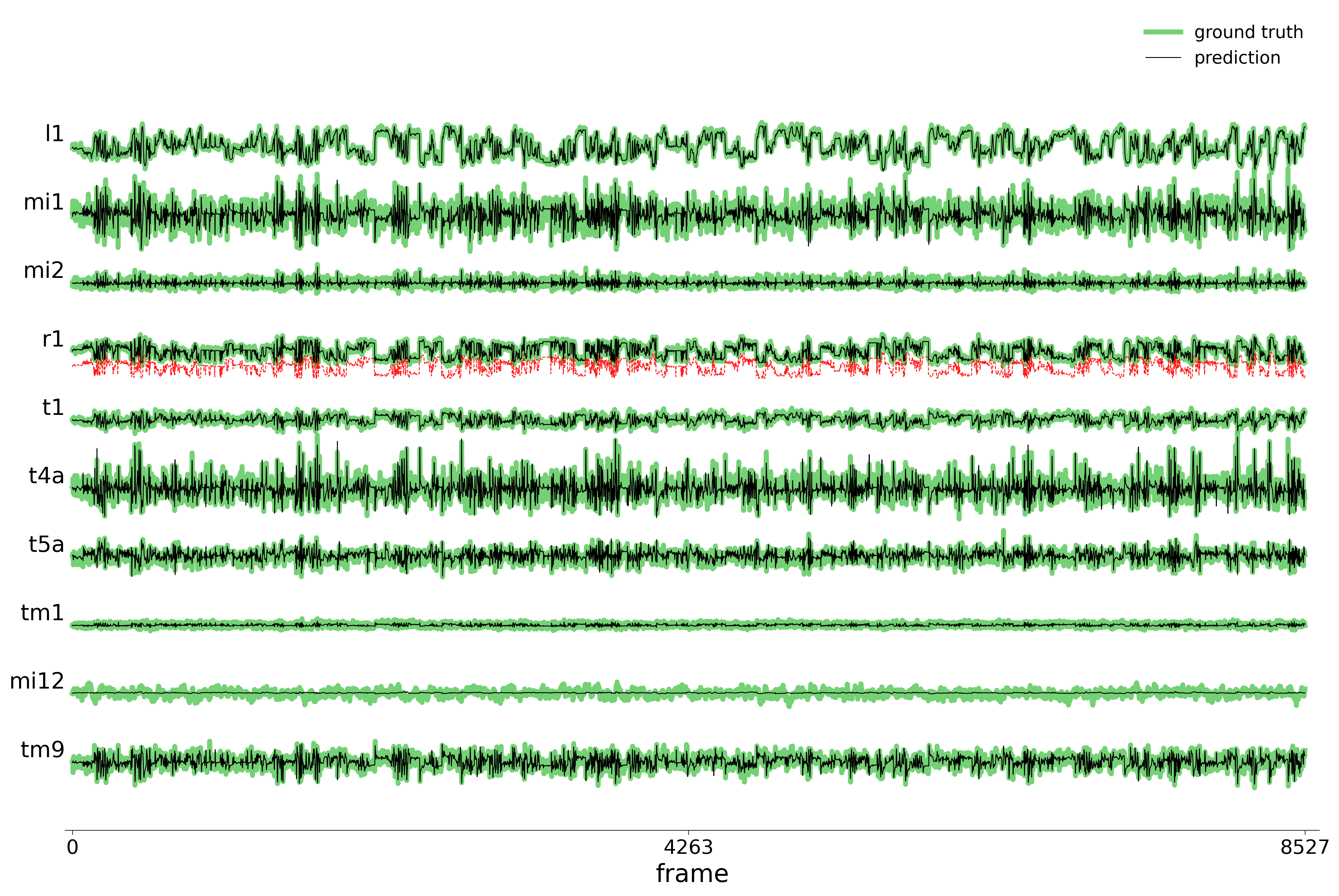

We evaluate each trained model on held-out stimuli and compute rollout predictions.

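```python
# Evaluate each trained null-edge model on held-out stimuli and compute rollouts.
# `datasets`, `configs`, `log_path`, `data_test`, and `device` are defined earlier
# in the notebook.
for config_name, table_label, label in datasets:
    config = configs[config_name]
    gnn_log_dir = log_path(config.config_file)

    print(f"\n--- Testing {label} ---")
    data_test(
        config=config,
        visualize=True,
        style="color name continuous_slice",
        verbose=False,
        best_model='best',
        run=0,
        step=10,
        n_rollout_frames=250,
        device=device,
    )
```

Rollout Traces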

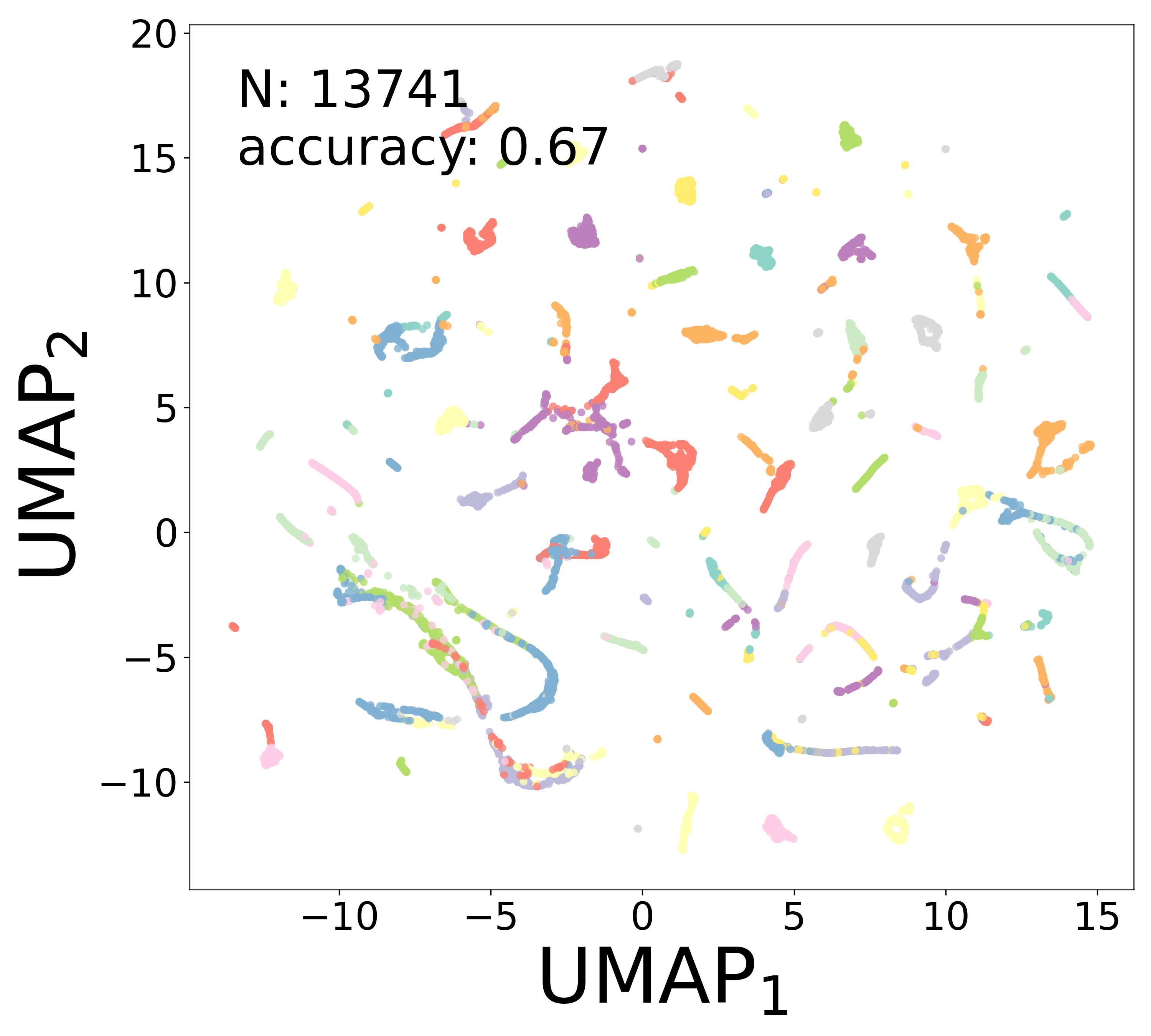

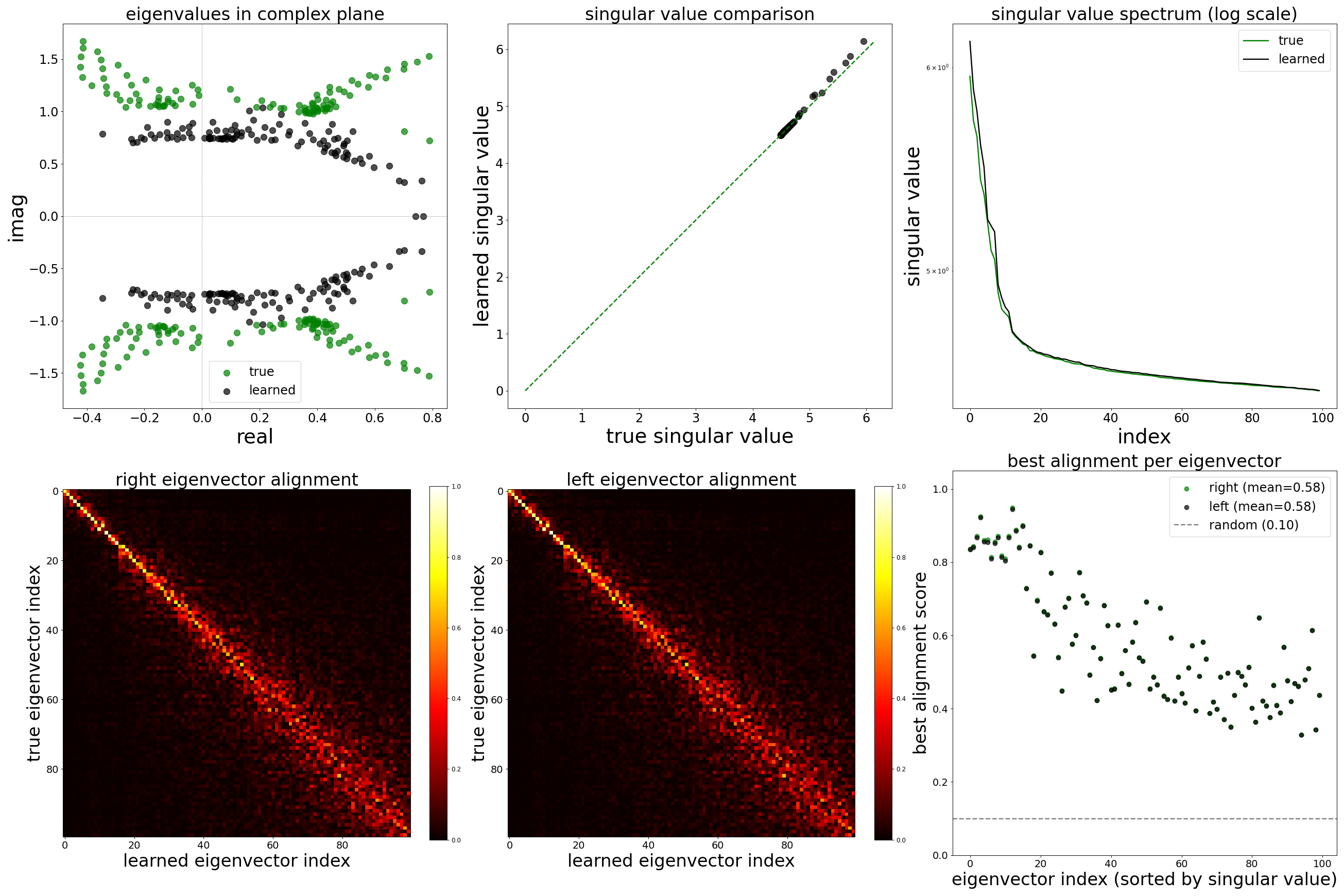

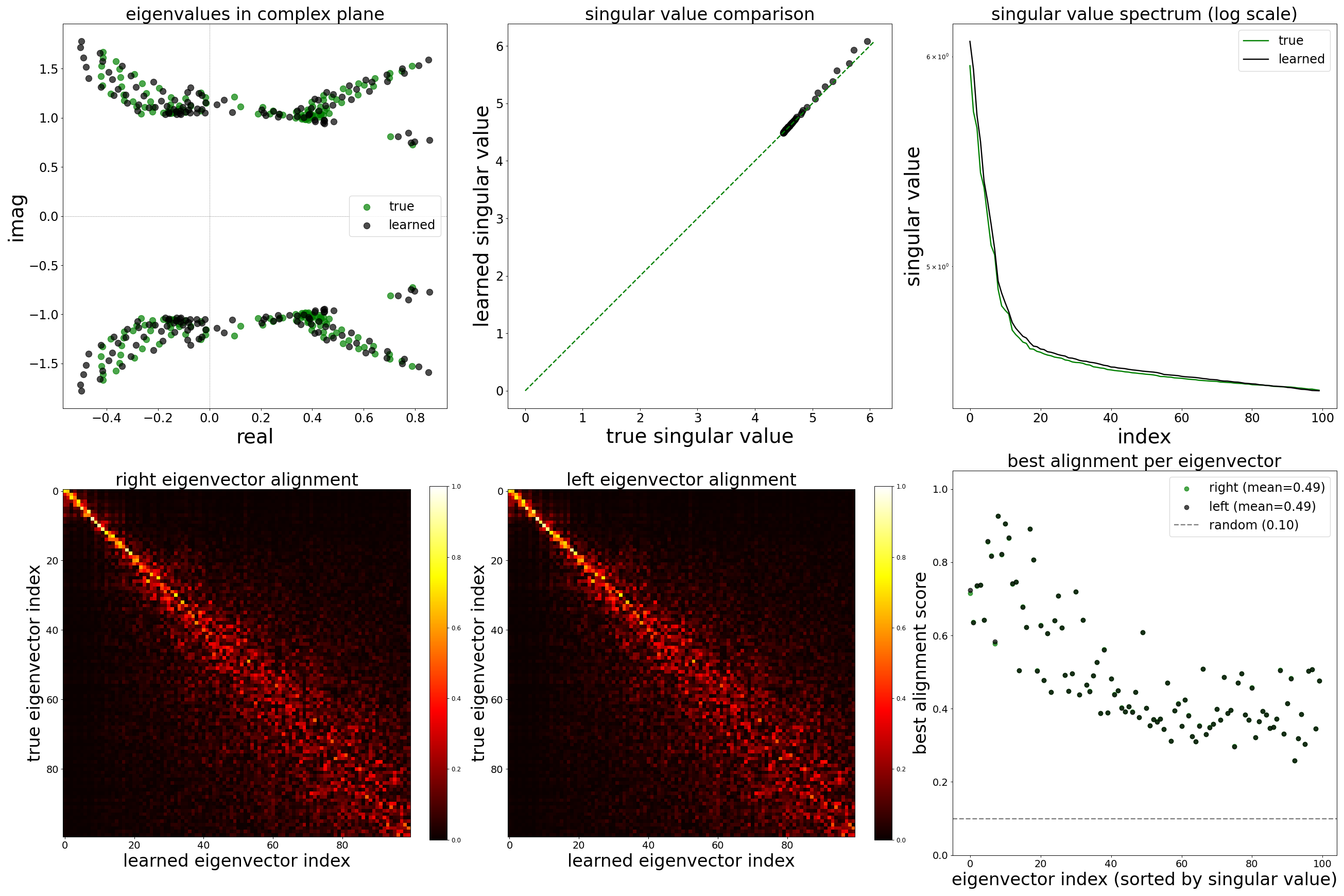

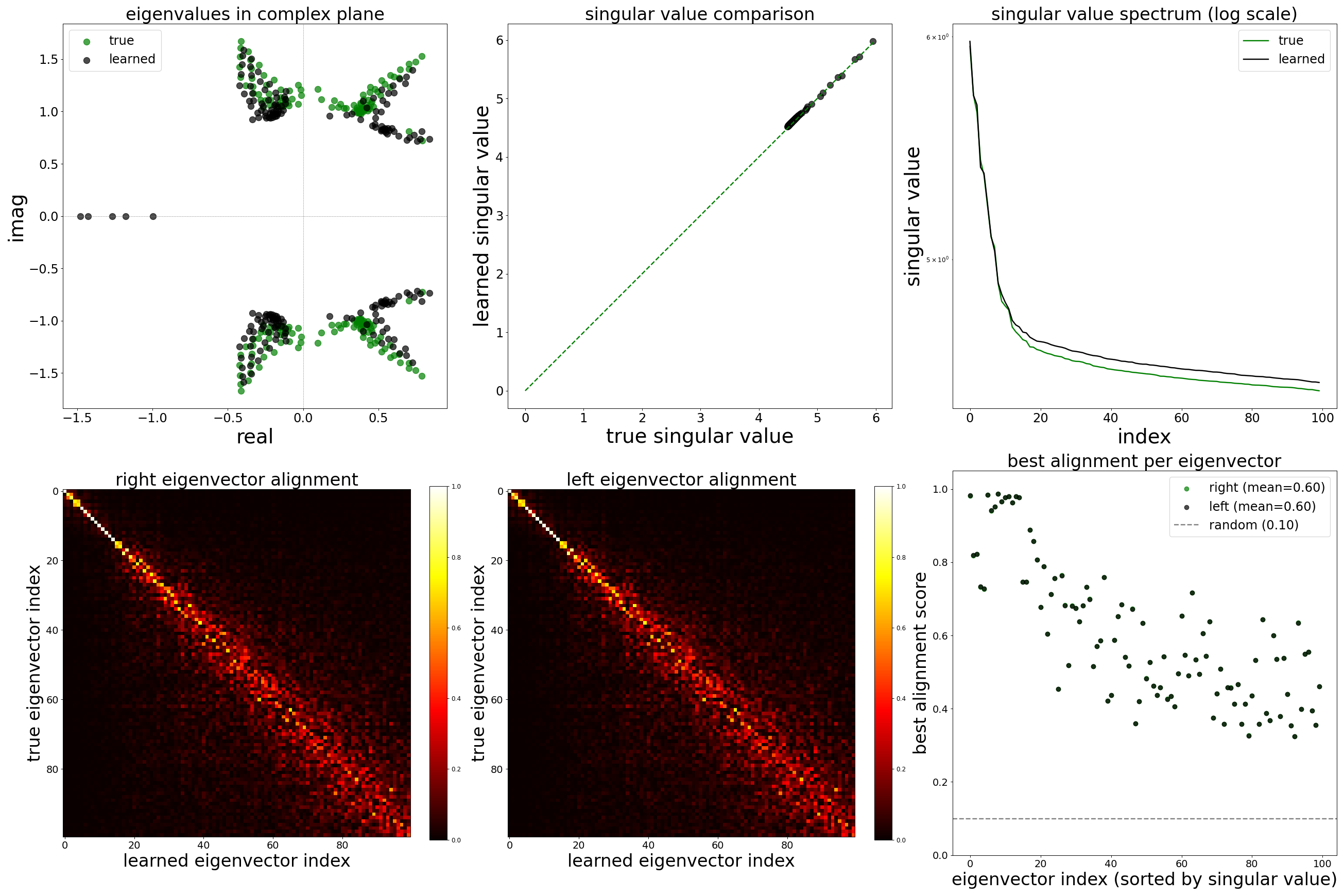

GNN Analysis: Learned Representations

We run the same analysis as Notebook 04 on each null-edge model to assess whether circuit recovery is preserved despite the corrupted adjacency matrix.

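```python
# Generate the analysis plots for the best checkpoint of each null-edge model.
# `datasets`, `configs`, `data_plot`, and `device` are defined earlier in the notebook.
for config_name, table_label, label in datasets:
    config = configs[config_name]

    print(f"\n--- Generating analysis plots for {label} ---")
    data_plot(
        config=config,
        config_file=config.config_file,
        epoch_list=['best'],
        style='color',
        extended='plots',
        device=device,
    )
```

Corrected Weights (\(W\))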
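The \(R^2\) and slope values reported for \(W\) compare learned weights against ground truth. The notebook does not spell out the exact computation, so the following is a sketch under one common convention: fit a least-squares line of learned against true weights and report its slope and coefficient of determination. The function name `slope_and_r2` is hypothetical, and the inputs are assumed to be already-corrected weights.

```python
import numpy as np

def slope_and_r2(true_w, learned_w):
    """Slope and R^2 of the least-squares line learned_w ~ slope * true_w + intercept."""
    true_w = np.asarray(true_w, dtype=float)
    learned_w = np.asarray(learned_w, dtype=float)
    slope, intercept = np.polyfit(true_w, learned_w, deg=1)
    residuals = learned_w - (slope * true_w + intercept)
    ss_res = np.sum(residuals ** 2)                       # unexplained variation
    ss_tot = np.sum((learned_w - learned_w.mean()) ** 2)  # total variation
    return float(slope), float(1.0 - ss_res / ss_tot)

# Synthetic example: learned weights are a slightly shrunk, noisy copy of the truth.
rng = np.random.default_rng(0)
true_w = rng.normal(size=1000)
learned_w = 0.95 * true_w + 0.05 * rng.normal(size=1000)
slope, r2 = slope_and_r2(true_w, learned_w)
print(f"slope = {slope:.3f}, R^2 = {r2:.3f}")
```

Under this convention a slope near 1 means the learned weights are on the correct scale, while \(R^2\) measures how tightly they follow the true values regardless of scale.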

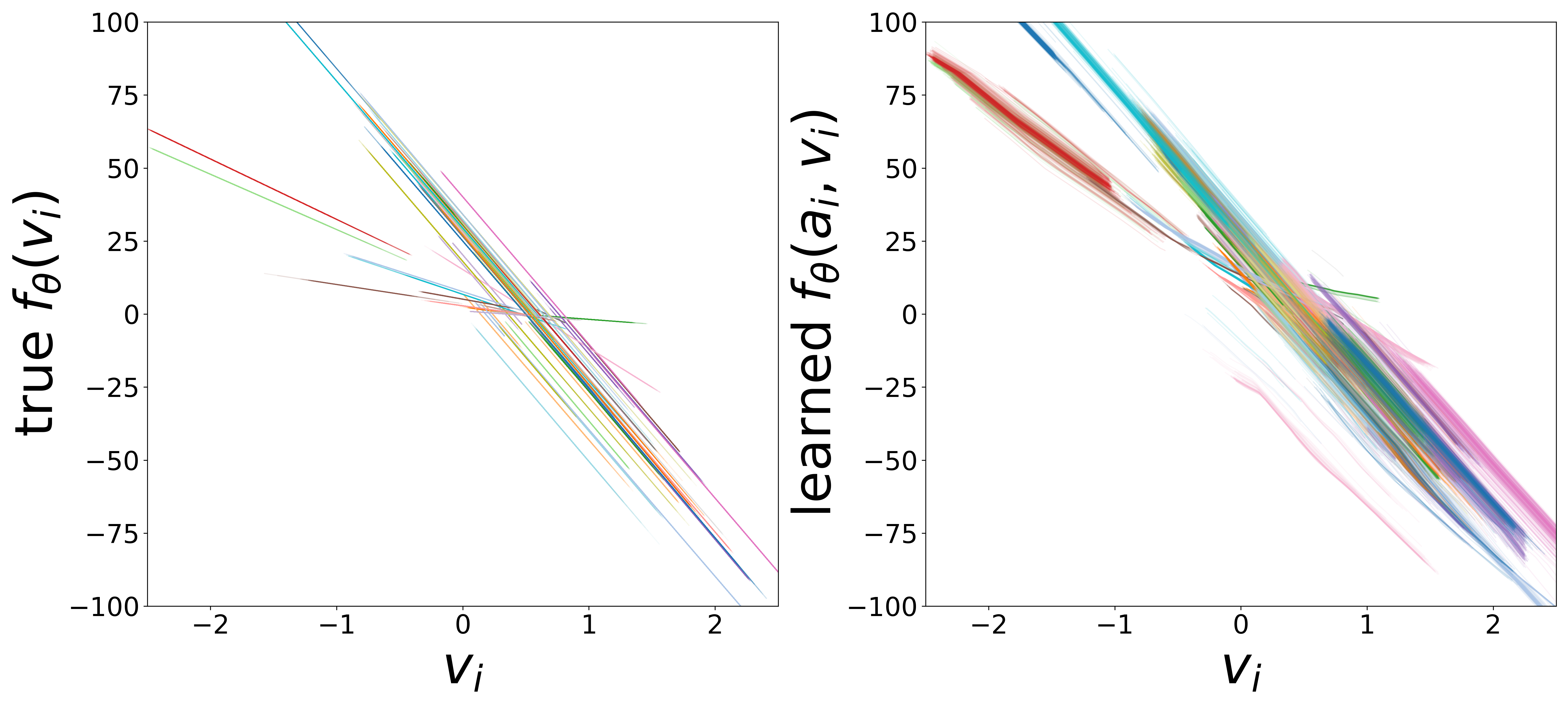

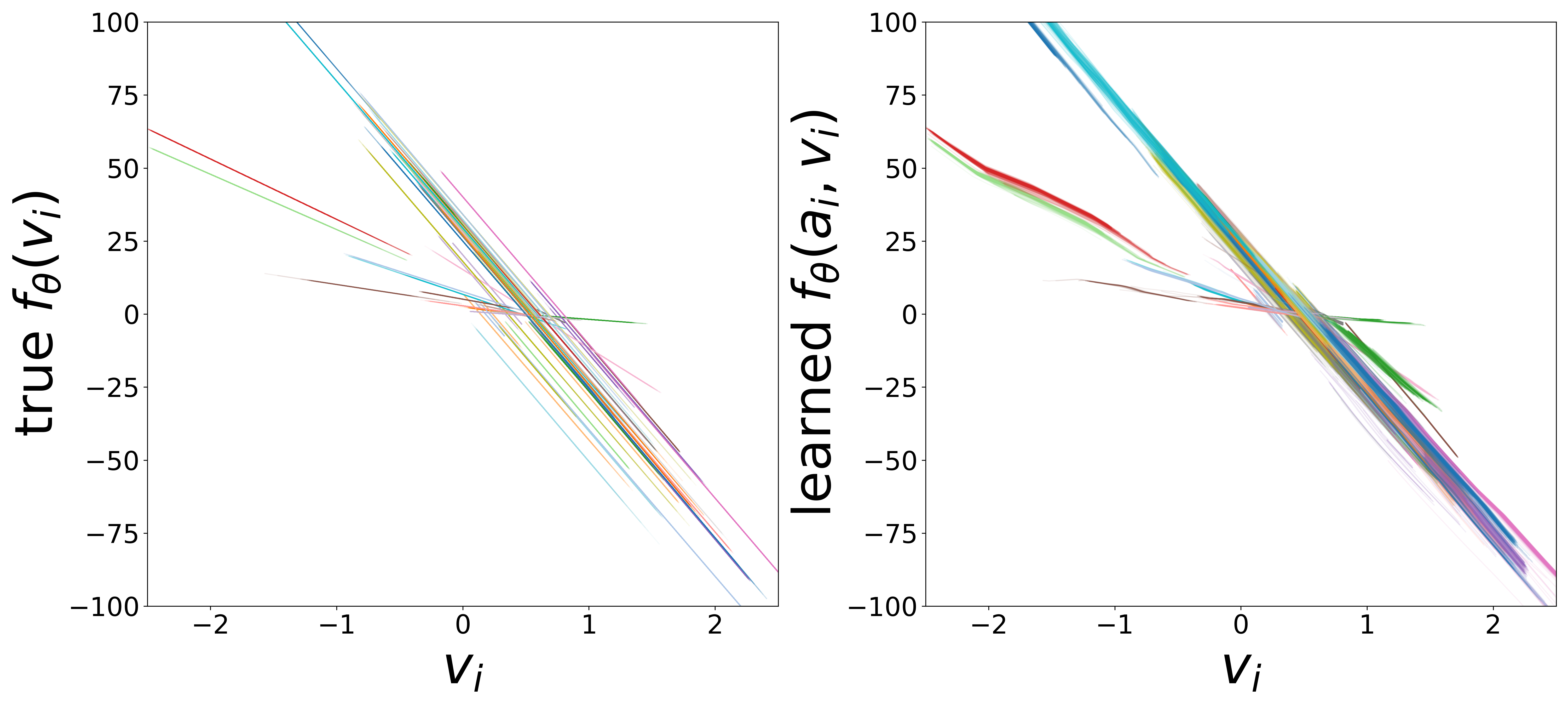

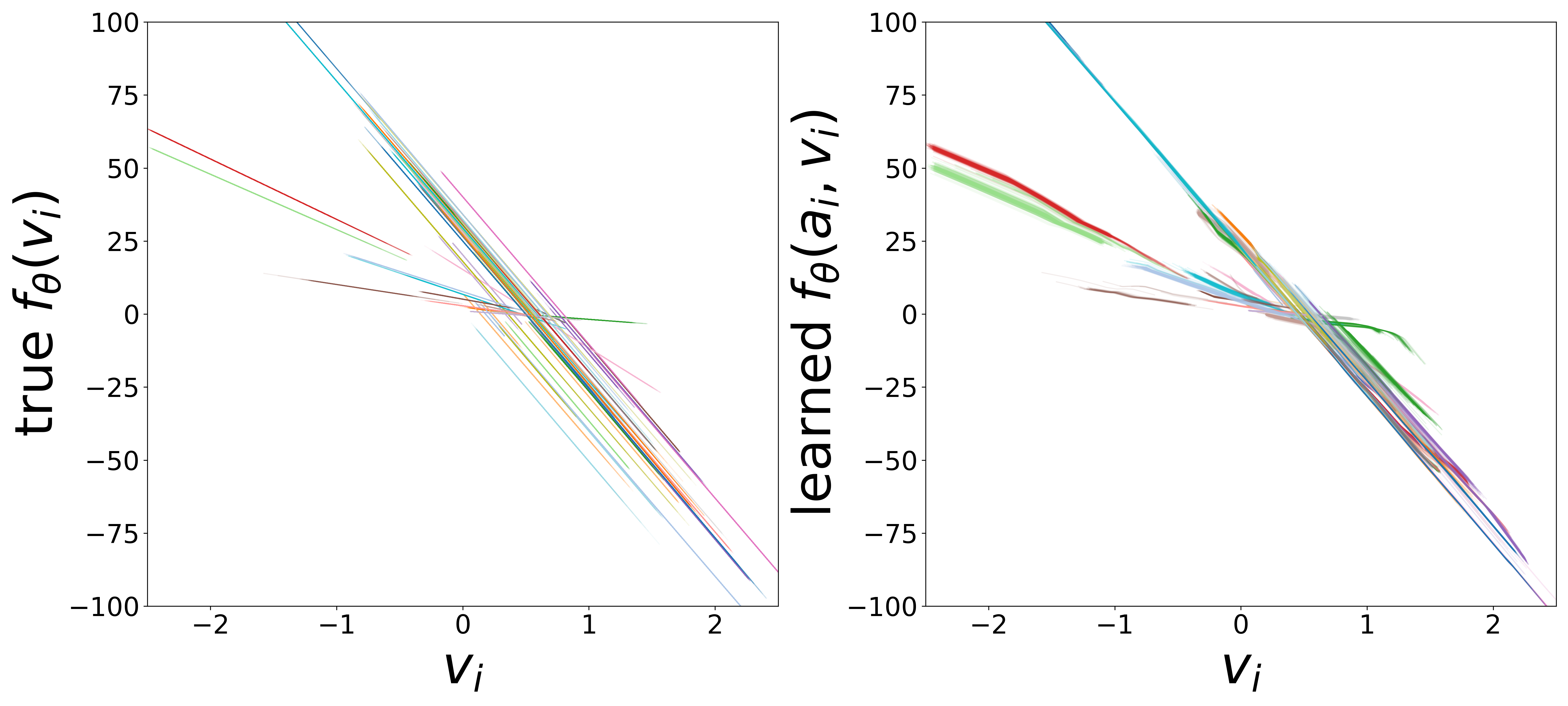

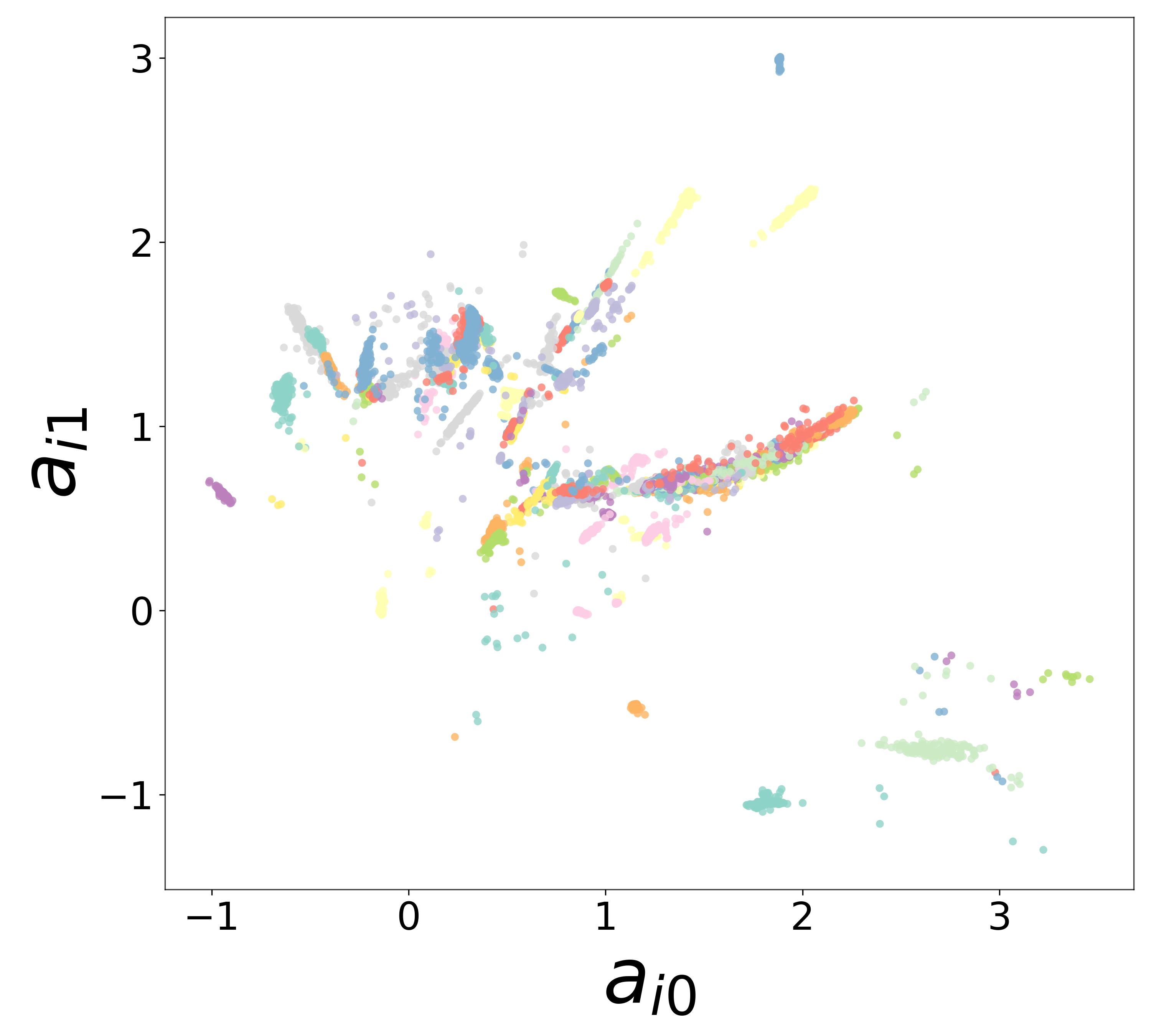

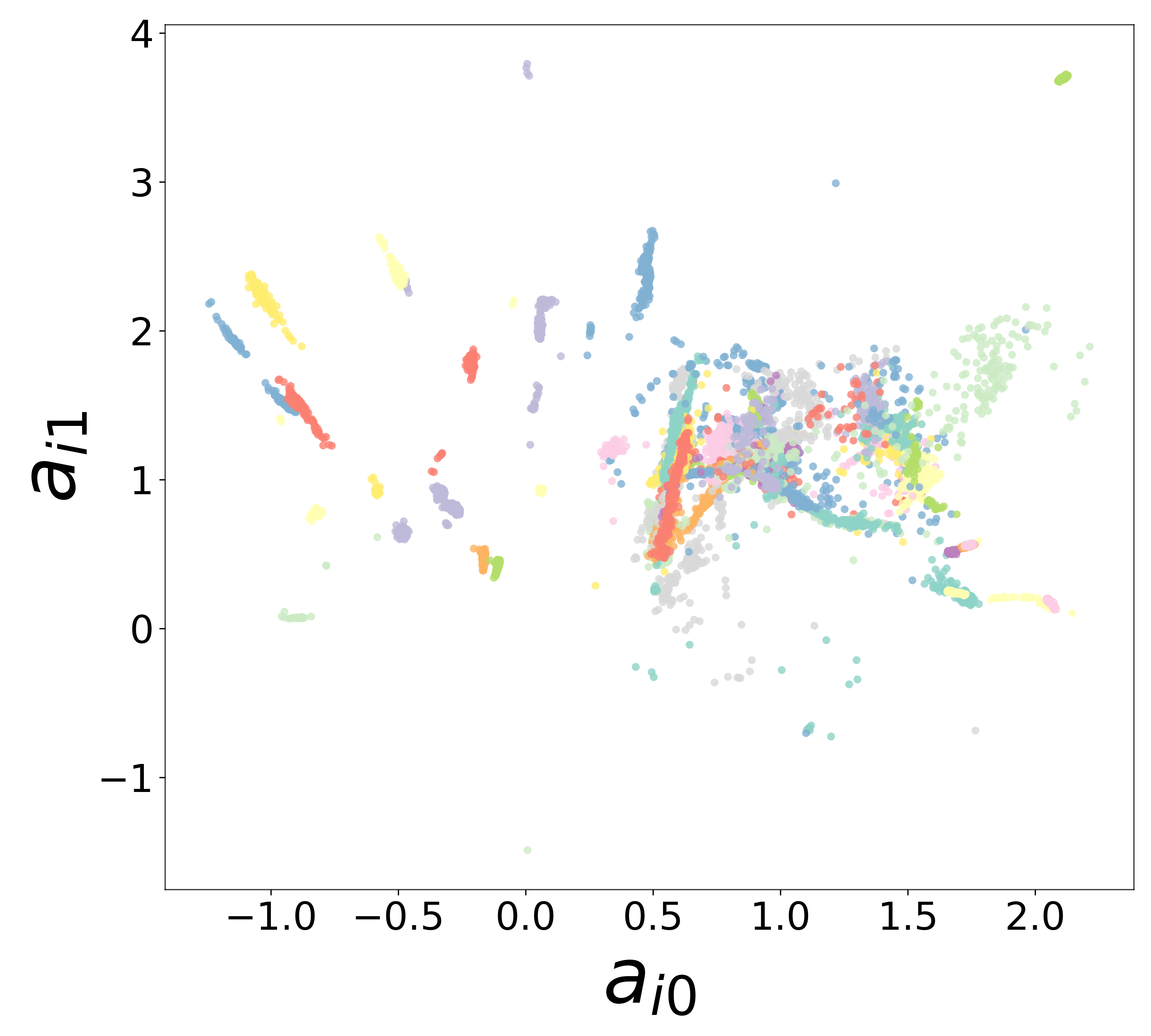

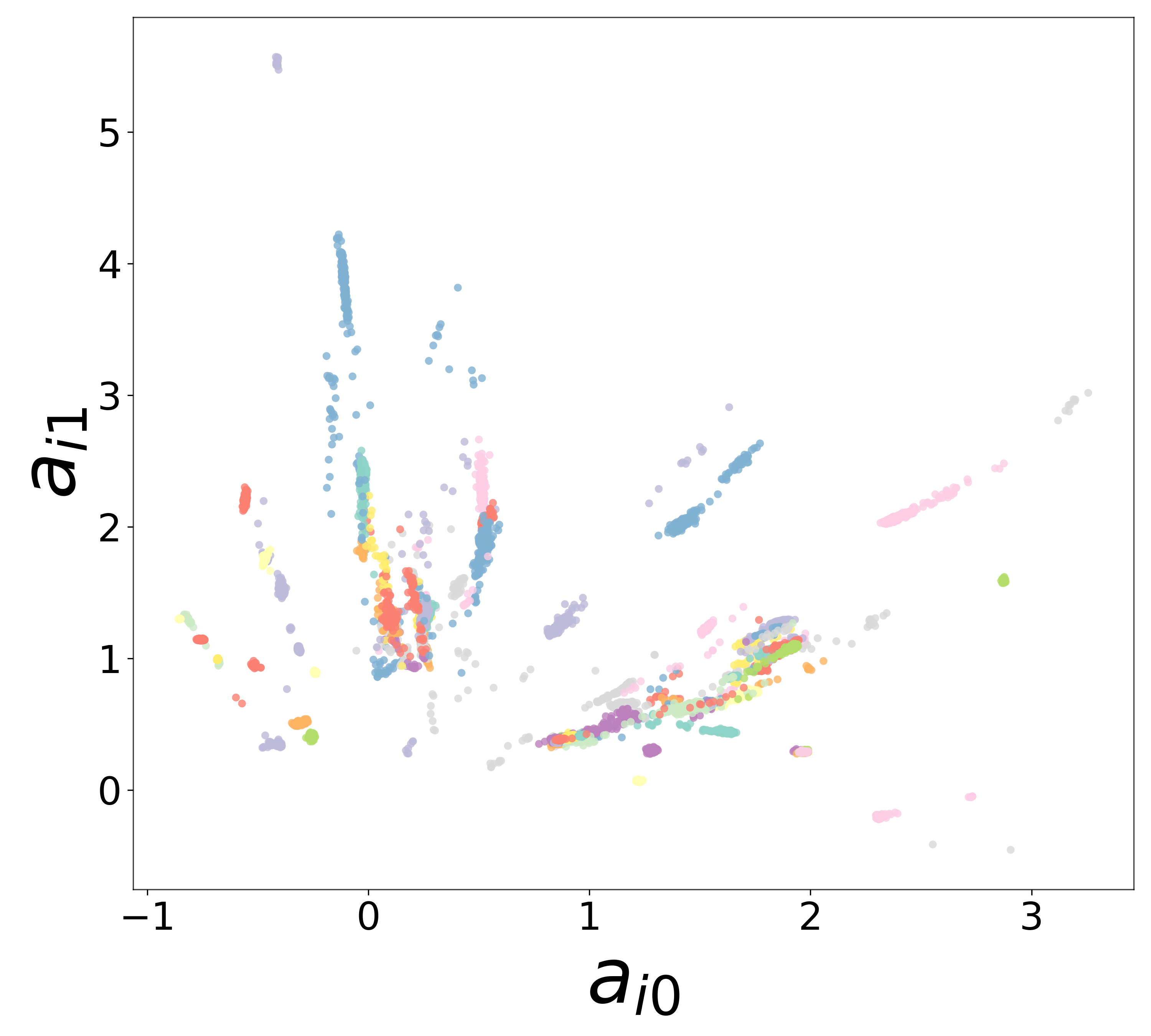

\(f_\theta\) (MLP\(_0\)): Neuron Update Function

Time Constants (\(\tau\))

Resting Potentials (\(V^{\text{rest}}\))

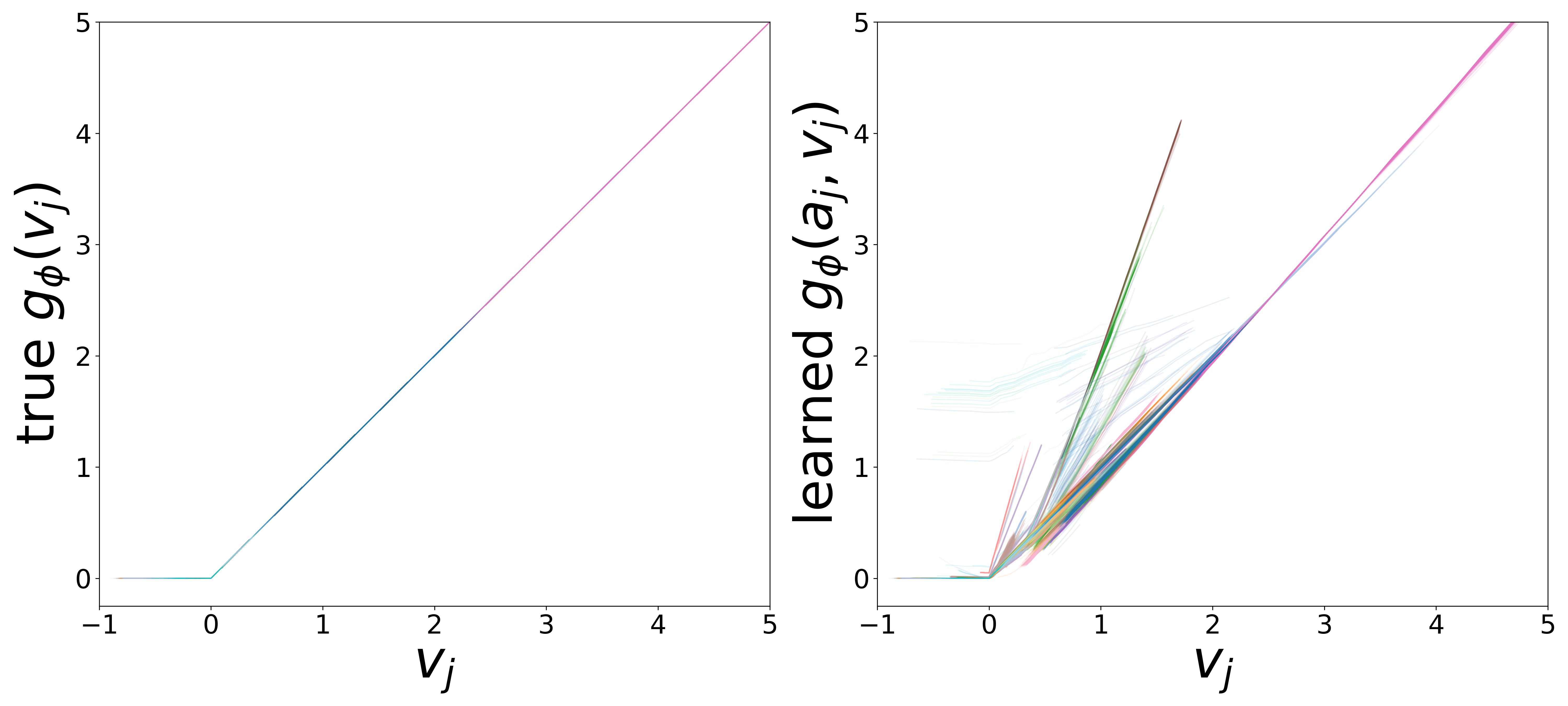

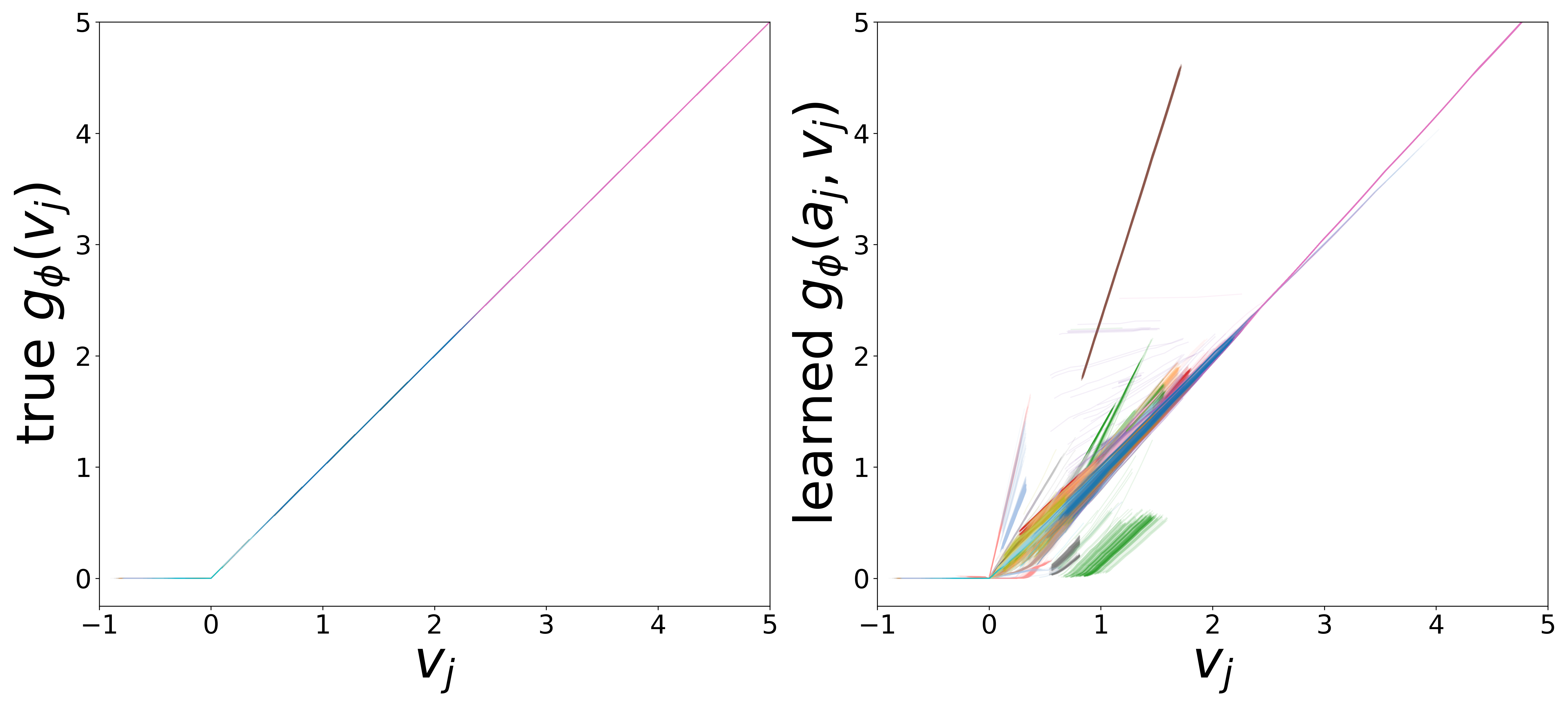

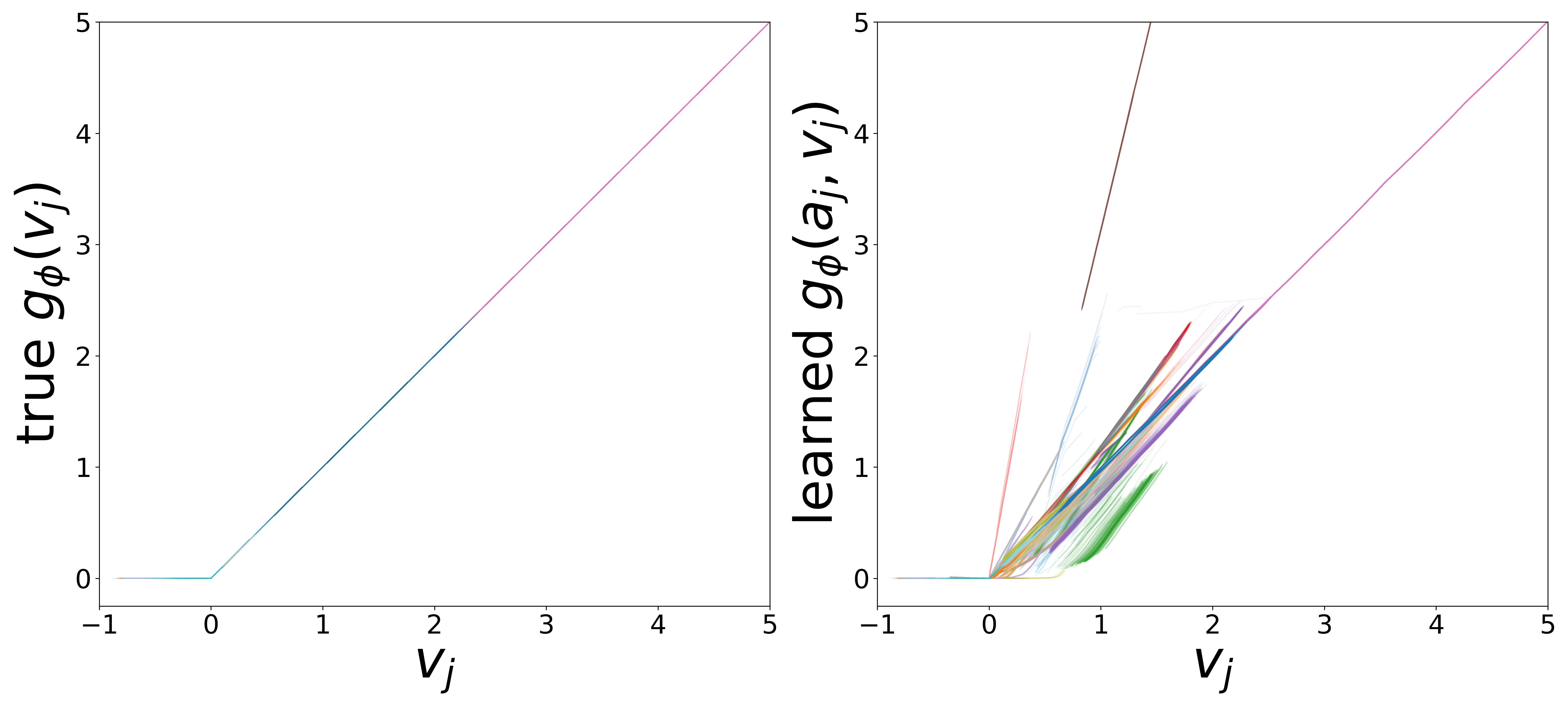

\(g_\phi\) (MLP\(_1\)): Edge Message Function

Neural Embeddings

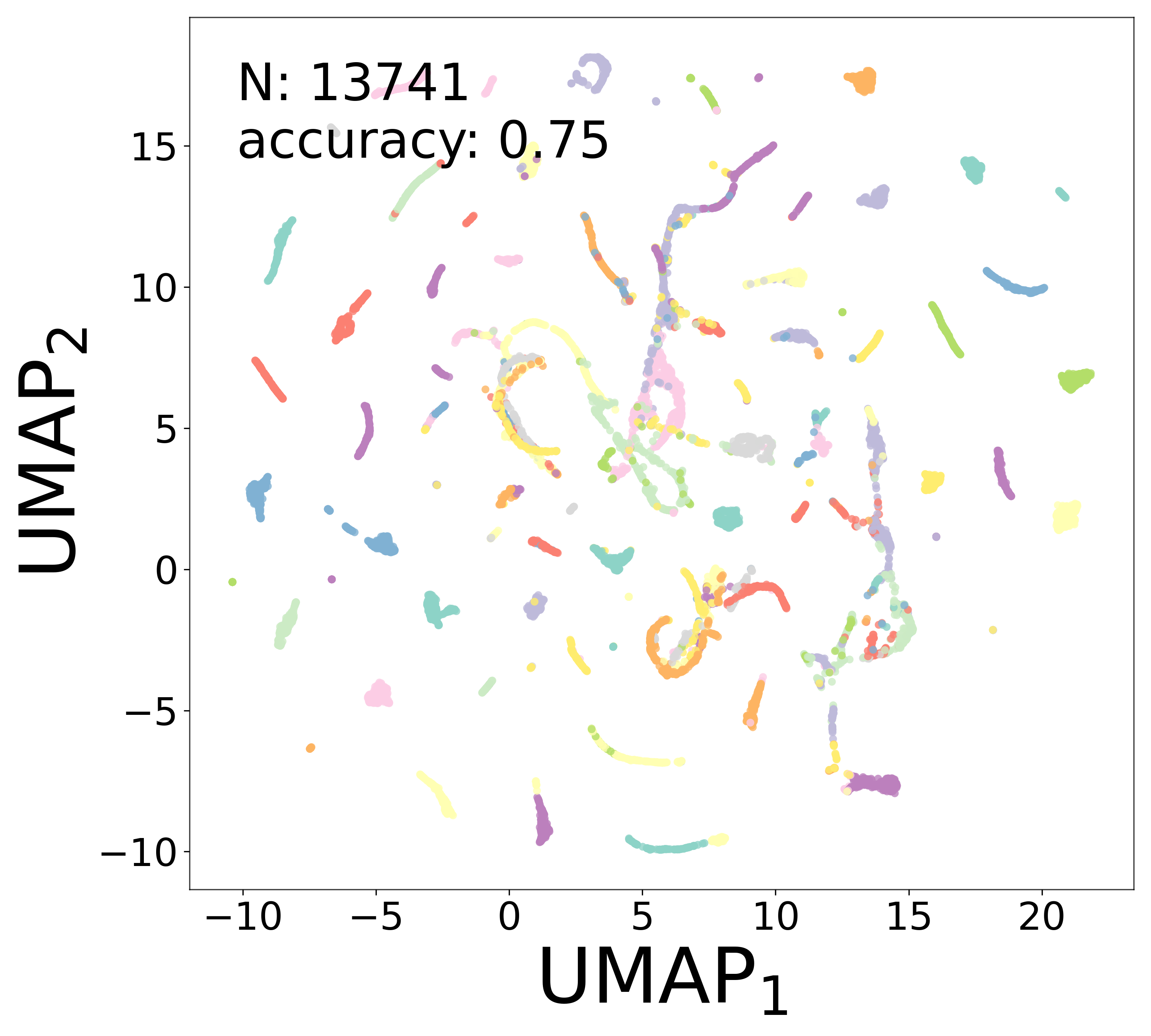

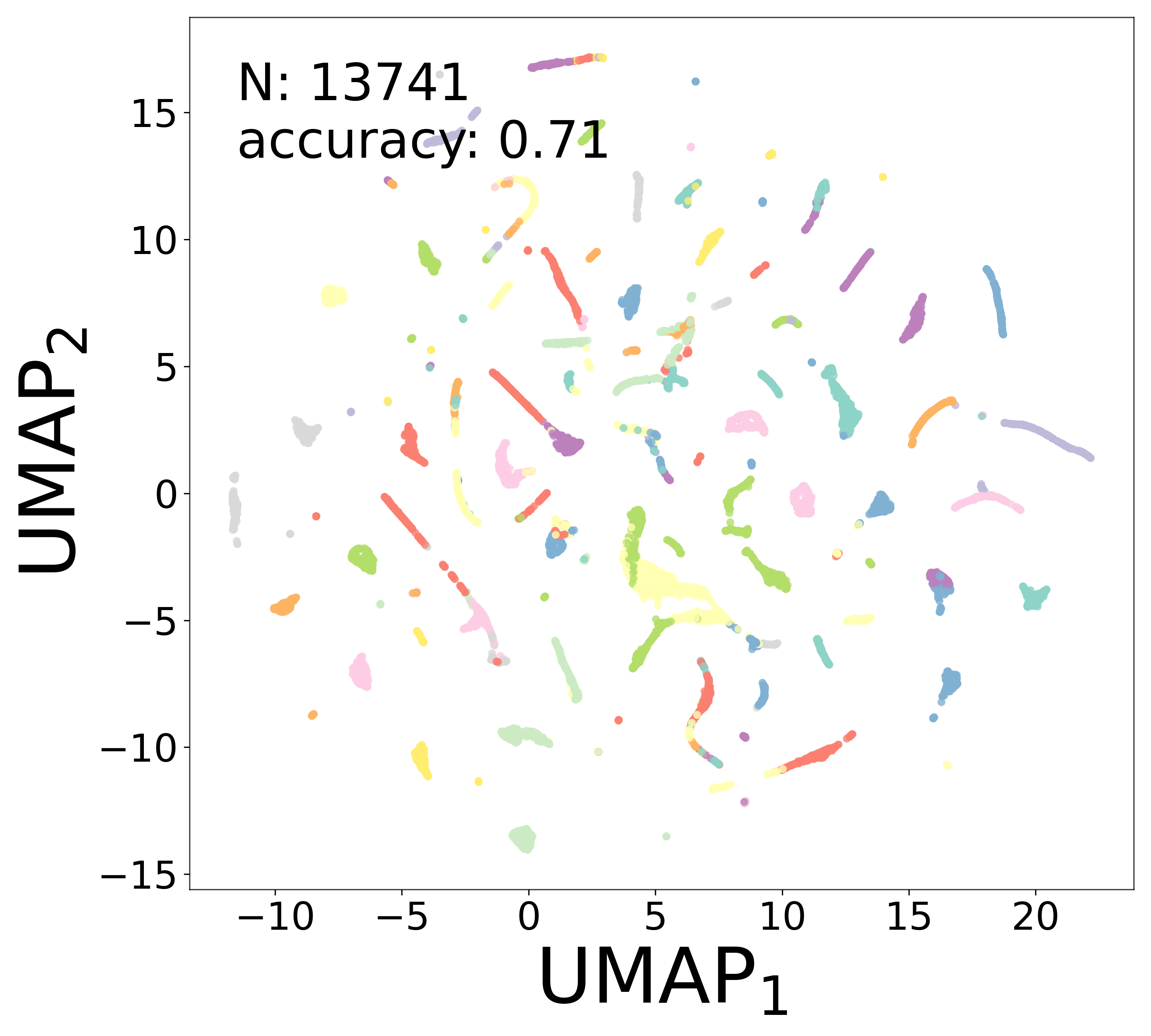

UMAP Projections

Spectral Analysis

Rollout Metrics

| Metric | 100% | 200% | 400% |
|---|---|---|---|
| RMSE | 0.1086 ± 0.0568 | 0.1080 ± 0.0564 | 0.1083 ± 0.0568 |
| Pearson r | 0.779 ± 0.237 | 0.780 ± 0.237 | 0.779 ± 0.239 |
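For readers who want to reproduce numbers of this kind from their own rollouts, here is a minimal sketch. It assumes that traces are stored as `(n_neurons, n_frames)` arrays and that the reported "mean ± std" is taken across neurons; the notebook does not state either, so treat both as assumptions. The function name `rollout_metrics` is illustrative.

```python
import numpy as np

def rollout_metrics(true_traces, pred_traces):
    """Per-neuron RMSE and Pearson r, summarized as (mean, std) across neurons.

    A neuron whose true or predicted trace is constant yields r = nan.
    """
    true_traces = np.asarray(true_traces, dtype=float)
    pred_traces = np.asarray(pred_traces, dtype=float)
    rmse = np.sqrt(np.mean((pred_traces - true_traces) ** 2, axis=1))
    # Pearson r per neuron: covariance of centered traces over product of norms.
    t = true_traces - true_traces.mean(axis=1, keepdims=True)
    p = pred_traces - pred_traces.mean(axis=1, keepdims=True)
    r = np.sum(t * p, axis=1) / np.sqrt(np.sum(t ** 2, axis=1) * np.sum(p ** 2, axis=1))
    return {
        "rmse": (float(rmse.mean()), float(rmse.std())),
        "pearson_r": (float(r.mean()), float(r.std())),
    }

# Synthetic example: predictions are the true traces plus Gaussian noise.
rng = np.random.default_rng(0)
true_traces = rng.normal(size=(50, 250))
pred_traces = true_traces + 0.1 * rng.normal(size=(50, 250))
print(rollout_metrics(true_traces, pred_traces))
```

The two metrics are complementary: RMSE is sensitive to offsets and scale errors, whereas Pearson r only measures whether predicted and true traces co-vary.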
